In this week’s free newsletter, I explained how bad the circular AI economy is in the simplest-possible terms:

Anthropic not have money to pay big cloud bills, because Anthropic company cost lots of money, more money than Anthropic make! So Anthropic only PAY cloud bills if OTHERS give it money! Amazon GIVE MONEY to Anthropic to GIVE BACK TO AMAZON, which mean no profit! And Amazon not give Anthropic enough money to pay it, so Anthropic have to ask OTHERS for money! That BAD! It mean BUSINESS not STABLE, and CLIENT not STABLE.

This bad when client MOST OF AI MONEY!

This ALSO mean that Anthropic RELIANT on OTHERS to pay AMAZON, which make AMAZON dependent on VENTURE CAPITAL for FUTURE REVENUE! Amazon SAY it have BIG BUSINESS, but BIG BUSINESS dependent on ANTHROPIC, which mean BIG BUSINESS dependent on VENTURE CAPITAL!

This SAME for GOOGLE! Both say they have BIG CLIENT, but BIG CLIENT MONEY not supported by REVENUE, so BIG CLIENT actually mean “HOW MUCH VENTURE CAPITAL MONEY ANTHROPIC HAVE.”

This bad business!

Sidenote: Me know you say “ANTHROPIC STOCK WORTH BIG MONEY,” but me need you remember how much capex Amazon and Google spend! Even if Anthropic stake worth $200 Billion, Amazon and Google still spend MANY more dollar than that on capex! And stake so BIG that neither able to SELL ALL. Only make gain on PAPER, which not REAL MONEY!

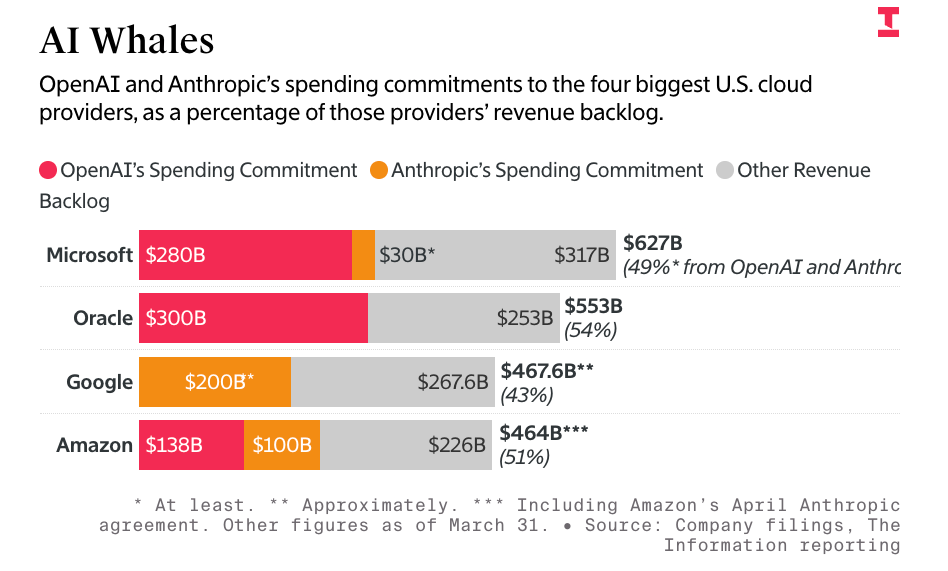

In other words, the entire AI economy effectively comes down to Anthropic and OpenAI, who take up at least 70% of Amazon’s, Google’s, and Microsoft’s compute capacity, 70% to 80% of their AI revenues and 50% of their entire revenue backlog, per The Information.

That’s $748 billion of the entire revenue backlog — not just AI compute — that’s dependent on Anthropic and OpenAI, two companies that cannot afford to pay these bills without constant venture capital infusions from either investors or the hyperscalers themselves.

This is a big problem, because Anthropic seems to be losing so much money that it had to raise $10 billion from Google and $5 billion from Amazon, and is reportedly trying to raise another $50 billion from investors, less than three months after it raised $30 billion on February 12, 2026, which was itself five months after it raised $13 billion in September 2025. That’s $58 billion in eight months, with the potential to reach $108 billion.
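The arithmetic behind those totals can be checked in a few lines. This is a minimal sketch that uses only the figures quoted above; the variable names are mine, and the $50 billion round is, as reported, something Anthropic is seeking rather than something it has closed.

```python
# Anthropic funding figures quoted in the text, in billions of US dollars.
closed_raises = {
    "September 2025 round": 13,
    "February 12, 2026 round": 30,
    "Google": 10,
    "Amazon": 5,
}
reported_pending_raise = 50  # reportedly being sought, not closed

raised_so_far = sum(closed_raises.values())
potential_total = raised_so_far + reported_pending_raise

print(f"Raised so far: ${raised_so_far} billion")
print(f"Potential total: ${potential_total} billion")
```

The two printed totals match the $58 billion and $108 billion figures above.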

Now Anthropic is taking over all 300MW of SpaceX/xAI/Elon Musk’s Colossus-1 data center, which will likely cost somewhere in the region of $2.5 billion to $3.5 billion a year, given that most of the data center is made up of H100 and H200 GPUs (with around 30,000 GB200 GPUs).

I also don’t think people realize how bad a sign this is for the larger AI economy.

SpaceX and Anthropic’s Compute Deal Shows That There’s Little Demand Outside of Anthropic and OpenAI For GPUs

Musk built the 300MW Colossus-1 to be “the most powerful AI training system in the world,” specifically saying that it was built “for training Grok,” with inference handled through Oracle (which originally earmarked Abilene for Musk but didn’t move fast enough for him) and other cloud providers. xAI, as one of the largest non-big-two providers, had so little need for AI capacity that it was able to hand off the entirety of its self-built data center capacity to Anthropic.

If xAI doesn’t need 300MW of compute capacity that it spent at least $4 billion to build, who, exactly, are the other large customers for AI compute? I’m not even being facetious. I truly don’t know, I can’t find them, I spent most of last week looking for them, and the only answer I had a week ago was “Elon Musk buying a lot of compute for xAI to make the freaks on the Grok Subreddit able to generate pornography.”

xAI is also the only non-OpenAI/Anthropic AI lab that’s built its own capacity, capacity it clearly didn’t need, which raises the question of why Musk needs however much capacity he’ll build at Colossus-2. Musk claims that xAI has moved all training to Colossus-2, but also that xAI would “provide compute to AI companies that are taking the right steps to ensure it is good for humanity.” This apparently includes Anthropic, which Musk called “misanthropic and evil” a little over two months ago. Researchers believe that the actual capacity of Colossus-2 is 350MW.

At $2.5 billion a year or so, Anthropic will be effectively the entirety of xAI’s revenue, which was around $107 million in the third quarter of 2025.
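To see the scale of that, it helps to annualize the quarterly figure. What follows is a rough sketch, not a forecast: it assumes (my assumption, not something reported) that xAI's third-quarter 2025 revenue pace simply holds for four quarters, and it uses the low end of the Colossus-1 estimate.

```python
# Figures from the text, in billions of US dollars.
xai_q3_2025_revenue = 0.107      # roughly $107 million in the quarter
anthropic_annual_payment = 2.5   # low end of the $2.5-3.5 billion estimate

# Assumption: the Q3 2025 pace continues unchanged for four quarters.
xai_annualized_revenue = xai_q3_2025_revenue * 4

# How large the Anthropic deal is relative to everything else xAI earns.
multiple = anthropic_annual_payment / xai_annualized_revenue
share = anthropic_annual_payment / (
    anthropic_annual_payment + xai_annualized_revenue
)

print(f"xAI annualized revenue: ${xai_annualized_revenue:.3f} billion")
print(f"Anthropic deal is {multiple:.1f}x that figure")
print(f"Anthropic share of combined revenue: {share:.0%}")
```

Under that flat-run-rate assumption, the deal is roughly 5.8 times xAI's annualized revenue, or about 85% of the combined total.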

To put this very, very simply: xAI should, in theory, have massive demand for AI compute, but its demand is apparently so small that it can flog a multi-billion-dollar data center to a competitor.

Sightline Climate found that 15.2GW of capacity is under construction and due to be completed by the end of 2027, and at this point I’m not sure anybody can make a compelling argument as to why it’s being built or who it’s for.

Who needs it? Who are the customers? Who is buying AI compute at such a scale that it would warrant so much construction? Where is the demand coming from if it’s not OpenAI and Anthropic?

These questions shouldn’t be that hard to answer, but trust me, I’ve tried and cannot find a GPU compute customer larger than $100 million a year, and honestly, that customer was xAI.

Through many hours of research, I’ve found that the vast majority — as much as 95% — of all compute demand comes from a few places:

- Meta, for reasons that defy logic.

- Microsoft, for OpenAI’s compute.

- Google, for Anthropic’s compute.

- Amazon, for Anthropic.

- OpenAI.

- Anthropic.

Otherwise, every data center deal you’ve ever read about is for a theoretical future customer or an unnamed “anchor tenant” that gives them “guaranteed, pre-committed occupancy” without being identified in any way.

Yet even that “pre-committed” language seems to be something of a myth, which I’ve chased down to a report from real estate firm JLL, which says that 92% of capacity currently under construction is precommitted through binding lease agreements or owner-occupied development. CBRE said it was 74.3% for the first half of 2025, and Cushman & Wakefield said it was 89%, though it also said that there was 25.3GW of capacity under construction, while Sightline sees 19.8GW under construction through the end of 2030.

And man, I cannot express how fucking difficult it is to find actual data center customers outside of the ones I’ve named above. In fact, it’s pretty difficult to find any customers for GPU compute not named Anthropic, OpenAI, Microsoft, Google, Meta or Amazon.

90%+ Of All AI Software and Compute Revenues Go Through Anthropic or OpenAI

Outside of OpenAI and Anthropic, effectively no AI software company makes more than a few hundred million dollars a year, and to make that money, each has to spend it on tokens generated by models run by one of those two companies.

When those companies generate those tokens, the money then flows to one of a few infrastructure providers (I’ll get to the breakdown shortly) to rent GPUs.

As I’ve discussed this week, at least 75% of Microsoft, Google and Amazon’s AI revenues come from OpenAI or Anthropic, and that’s before you count the money that Microsoft, Google and Amazon make reselling models from both companies.

To get specific, The Information reports that Anthropic will pay around $1.6 billion to Amazon for reselling its models. OpenAI, per my own reporting, sent Microsoft $659 million as part of its revenue share.

AI startups, all of whom are terribly unprofitable, predominantly spend their funding on models sold by OpenAI and Anthropic. Per Newcomer, as of August last year, Cursor was spending 100% of its revenue on Anthropic. Harvey, an AI tool for lawyers, raised $960 million between February 2025 and March 2026, with most of its costs flowing to Anthropic and OpenAI.

Effectively every AI startup is a feeder for API revenue for Anthropic or OpenAI, and as a result, almost every dollar of AI revenue flows to either Google, Microsoft or Amazon.

As Anthropic and OpenAI are extremely unprofitable, Google, Microsoft and Amazon then take that money and re-invest it in OpenAI and Anthropic, as all three have done in the past few years.

The Devil’s Deal of OpenAI and Anthropic

At the beginning of the bubble, all three companies believed that OpenAI and Anthropic were golden geese that were, through the startups they inspired and powered, laying golden eggs that necessitated expanding their operations, leading them to spunk hundreds of billions of dollars in capex, with Amazon building the massive Project Rainier in Indiana for Anthropic and Microsoft the Atlanta and Wisconsin-based Fairwater data centers for OpenAI.

They likely also thought their own services would grow fast enough to warrant the expansion, or that other large GPU consumers would rear their heads.

That never happened. Instead, OpenAI grew bigger and more demanding of Microsoft’s compute capacity, leading Microsoft to allow it to seek other partners, in part (per The Information) because some executives believed OpenAI would die:

After striking the blockbuster deal in 2023, several top Microsoft executives told colleagues around that time that they thought OpenAI’s business would eventually fail, even if its technology was good, according to a former manager who discussed it with them.

By November 2025, OpenAI had signed a $300 billion deal with Oracle, a $22 billion deal with CoreWeave, a $38 billion deal with Amazon, and a theoretical deal with both AMD and NVIDIA.

Yet by this point, Microsoft realized it was in a bind: the majority of its AI revenues (at least 70%, if not more) were dependent on OpenAI, but it had already walked away from 2GW of data center capacity to reduce its capex costs. It had also, as part of OpenAI’s conversion to a for-profit company, convinced OpenAI to spend $250 billion in incremental revenue on Azure.

So Microsoft chose to start spreading out that capacity to neoclouds like Nebius and Nscale, effectively bankrolling their entire futures based on theoretical revenue from OpenAI, a company that plans to burn $852 billion in the next four years and cannot afford to pay any of its bills without continual subsidies. These companies were now part of a multi-threaded dependency that ultimately ended up at one place: OpenAI, which also makes up the vast majority of inference chip maker Cerebras’ revenue with its 3-year, $20 billion deal.

Meanwhile, Amazon and Google thought they had it made. Anthropic was growing, and its compute demands were reasonable enough that neither had to stretch themselves too thin…until the second quarter of 2025, when Anthropic’s accelerated growth led to it starting to push against the limits of Google and Amazon’s capacity.

So Google agreed to backstop several billion dollars behind two deals with Fluidstack, a brand new AI compute company, and Amazon continued expanding its Project Rainier data center.

Yet Anthropic’s hunger wasn’t sated. After mocking OpenAI in February 2026 for “YOLOing” into compute deals (and having signed a cloud deal with Microsoft), it massively expanded its AWS and Google Cloud deals, signed a deal with CoreWeave, and, as I discussed above, took over the entirety of Musk’s Colossus-1 data center.

And all of this is only happening because, based on my analysis, very little actual demand for AI compute exists outside of OpenAI and Anthropic, and OpenAI and Anthropic only exist because of Microsoft, Google, and Amazon both building and expanding their infrastructure to cater to them.

In reality, OpenAI and Anthropic are the only meaningful companies in the AI industry. They are the majority of revenue, the majority of capacity and the majority of demand. Microsoft, Google and Amazon have exploited the desperation in a tech industry that’s run out of hypergrowth ideas, and created a near-imaginary industry by propping up both companies.

The mistake that most make in measuring the circularity of OpenAI and Anthropic is to focus entirely on the money raised — $13 billion from Microsoft and up to $50 billion from Amazon for OpenAI, and as much as $80 billion from Amazon and Google for Anthropic.

The correct analysis starts with measuring infrastructure. Based on discussions with sources and analysis of multiple years of reporting, I estimate that of the roughly $700 billion in capex spent by Google, Amazon and Microsoft since 2023, at least 5.5GW of capacity costing at least $300 billion has been built entirely for two companies. This has in turn inflated sales through multiple counterparties involving NVIDIA, ODMs like Quanta, Foxconn, Supermicro and Dell, and created a form of market-driven AI psychosis that inspired Meta to burn over $158 billion in three years and the entire world to convince itself that AI was the biggest thing ever.
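Those estimates imply two ratios worth spelling out: how much of the hyperscalers' capex went to just two customers, and what each gigawatt cost. A quick sketch using only the estimates in the paragraph above (the variable names are mine, and since the dedicated figures are "at least" numbers, the share is a floor rather than a precise measurement):

```python
# Estimates from the paragraph above, in billions of US dollars.
total_capex_bn = 700         # roughly, spent since 2023
dedicated_capex_bn = 300     # "at least", built for OpenAI and Anthropic
dedicated_capacity_gw = 5.5  # "at least"

# Share of total capex that went to capacity for the two companies.
share_of_capex = dedicated_capex_bn / total_capex_bn

# Implied build cost per gigawatt of that dedicated capacity.
cost_per_gw_bn = dedicated_capex_bn / dedicated_capacity_gw

print(f"Share of capex built for two companies: {share_of_capex:.1%}")
print(f"Implied cost per GW: ${cost_per_gw_bn:.1f} billion")
```

On those numbers, about 43% of the capex was spent on capacity for OpenAI and Anthropic, at roughly $54.5 billion per gigawatt.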

The reason that there isn’t another OpenAI or Anthropic is that Google, Microsoft, and Amazon bankrolled their entire infrastructure, fed them billions of dollars, and then charged them discount rates for their early compute, with sources telling me that Anthropic pays vastly below-market rates for Trainium compute from Amazon, and The Information reporting that OpenAI was paying $1.30 per A100 per hour in 2024, at or around the cost of running them.

By sacrificing their entire infrastructure to OpenAI and Anthropic, the hyperscalers created the illusion of demand by feeding themselves money, all while buying endless GPUs and TPUs to fill further data centers for two customers, both of whom paid discount rates that lost them money.

This capex bacchanalia gave all three companies a massive boost to their stock prices, so they kept going, even though there wasn’t really demand other than for Anthropic or OpenAI, two companies that they had to constantly cater to with investment capital and server maintenance.

The belief became that all you had to do was plan to build a data center and you’d print money, boosting NVIDIA’s sales and associated counterparties in memory stocks like Sandisk. Except that never happened.

Every data center provider that doesn’t have an Anthropic, OpenAI, or Meta-related contract makes pathetic amounts of revenue that can barely keep up with its debt. AI startups make meager revenues, and lose multiples more than they can ever hope to make.

The entire AI industry relies upon two companies that expect to burn at least $1 trillion in the next four years, with Anthropic, the supposed “compute-conscious” AI company, committing to at least $330 billion in spend in the next few years.

Where does that money come from, exactly? Because neither of these companies has anything approaching a path to profitability.

Based on a deep analysis of every publicly-available source on AI compute, I can find only two significant — over $100 million a year — purchasers of AI compute outside of Anthropic, OpenAI, Meta, or associated parties like NVIDIA, Microsoft, Google and Amazon. Those two are Poolside, which reportedly spends $400 million a year, an untenable position as it only raised $500 million in total funding before its $2 billion in funding collapsed earlier this year, and Perplexity, which appears to spend some amount of money with CoreWeave and Microsoft Azure. Both run at a massive loss.

Nowhere is this lack of true demand more obvious than in the neoclouds, which only seem capable of signing big deals with Anthropic, OpenAI, Microsoft (for OpenAI), and Google (for Anthropic). Oh, and Meta, which is doing this because the existence of ChatGPT gave Mark Zuckerberg such profound AI psychosis that he’s made Meta build him a CEO chatbot to talk to and burned over $150 billion.

The AI industry is a brittle, circular economy, one only made possible by a lack of financial regulation and a tech industry that’s run out of ideas. Without hyperscalers propping up OpenAI and Anthropic, there would be no reason to buy so many GPUs or build so many data centers, and neoclouds would have no reason to exist.

This is a giant con, a giant illusion, and a giant mistake.

Coming Up On This Week’s Where’s Your Ed At Premium…

- 90%+ of all AI revenues flow through Anthropic and OpenAI.

- 90%+ of all AI compute demand comes from Anthropic, OpenAI, Meta, or associated counterparties like Google and Amazon buying compute for Anthropic or OpenAI.

- The vast majority of AI operations don’t require more than a few hundred to a thousand GPUs for inference, and at most 20,000 GPUs for training models.

- This means that for the 15.2GW of data centers under construction and due by the end of 2027 ($157 billion in annual revenue) to make sense, thousands of companies will have to rent hundreds or thousands of GPUs.

- This also means that the DeepSeek problem — the reason that everybody freaked out in January 2025 — is actually industry-wide.

- More than 50% of Microsoft, Google, Amazon, CoreWeave, and Oracle’s entire revenue backlogs are from OpenAI and Anthropic.

- Neoclouds are unsustainable, imaginary businesses only made possible by continual subsidies from NVIDIA and the compute demands of OpenAI, Anthropic and Meta.

- Outside of Anthropic and OpenAI, only around $13 billion in AI compute demand exists, with much of it taken up by Meta and NVIDIA backstopping neoclouds like CoreWeave and IREN.

- ODMs like Supermicro, Dell, Quanta and Foxconn are largely dependent on AI server revenues, most of which flow through OpenAI and Anthropic’s counterparties.
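The 15.2GW figure in the list above can be sanity-checked against the Colossus-1 numbers from earlier in the piece. This is a back-of-envelope sketch under stated assumptions, not a model: it treats the $2.5 billion to $3.5 billion Colossus-1 estimate as a per-300MW revenue rate, and it uses the $100 million-a-year threshold from earlier as a hypothetical "large customer" size. Both choices are mine, for illustration only.

```python
# Figures from the text.
capacity_gw = 15.2        # under construction, due by end of 2027
annual_revenue_bn = 157   # annual revenue attached to that capacity

# Revenue each gigawatt would need to generate per year.
revenue_per_gw_bn = annual_revenue_bn / capacity_gw

# Cross-check: Colossus-1 is 300MW at an estimated $2.5-3.5 billion a year.
colossus_gw = 0.3
colossus_low_per_gw = 2.5 / colossus_gw
colossus_high_per_gw = 3.5 / colossus_gw

# Assumption: every customer spends exactly $100 million a year.
customer_spend_bn = 0.1
customers_needed = round(annual_revenue_bn / customer_spend_bn)

print(f"Required revenue per GW: ${revenue_per_gw_bn:.1f} billion a year")
print(f"Colossus-1 range per GW: ${colossus_low_per_gw:.1f}-"
      f"{colossus_high_per_gw:.1f} billion a year")
print(f"$100 million-a-year customers needed: {customers_needed}")
```

The implied rate of about $10.3 billion per gigawatt sits inside the Colossus-1 range, so the two estimates are at least consistent with each other. At $100 million a year apiece, filling that capacity would take 1,570 customers of a size I could barely find two of.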