
Hi! If you like this piece and want to support my work, please subscribe to my premium newsletter. It’s $70 a year, or $7 a month, and in return you get a weekly newsletter that’s usually anywhere from 5000 to 18,000 words, including vast, extremely detailed analyses of NVIDIA, Anthropic and OpenAI’s finances, and the AI bubble writ large. I just put out a massive Hater’s Guide To Private Equity and one about both Oracle and Microsoft in the last month.

I am regularly several steps ahead in my coverage, and you get an absolute ton of value, several books’ worth of content a year in fact! In the bottom right hand corner of your screen you’ll see a red circle — click that and select either monthly or annual. Next year I expect to expand to other areas too. It’ll be great. You’re gonna love it.

Soundtrack - The Dillinger Escape Plan - Unretrofied

So, last week the AI boom wilted brutally under the weight of an NVIDIA earnings report that beat expectations but didn’t make anybody feel better about the overall stability of the industry. Worse still, NVIDIA’s earnings also mentioned $27bn in cloud commitments — literally paying its customers to rent the chips it sells, heavily suggesting that the underlying revenue isn’t there.

A day later, CoreWeave reported its Q4 FY2025 earnings, posting a loss of 89 cents per share on $1.57bn in revenue, with an operating margin of negative 6% for the quarter. Its 10-K only just came out the day before I went to press, and I’ve been pretty sick, so I haven’t had a chance to look at it deeply yet. That being said, it confirms that 67% of its revenue comes from one customer (Microsoft).

Yet the underdiscussed part of CoreWeave’s earnings is that it had 850MW of power at the end of Q4, up from 590MW in Q3 2025 — an increase of 260MW…and a drop in revenue per megawatt if you actually do the maths.

- In Q3 2025, CoreWeave had $1.36bn in revenue on 590MW of compute, working out to $2.3m per megawatt.

- In Q4 2025, CoreWeave had $1.57bn in revenue on 850MW of compute, working out to $1.847m per megawatt.

While this is a somewhat-inexact calculation — we don’t know exactly how much compute was producing revenue in the period, and when new capacity came online — it shows that CoreWeave’s underlying business appears to be weakening as it adds capacity, which is the opposite of how a business should run.
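The per-megawatt arithmetic can be reproduced in a few lines. This is a minimal sketch that, like the text, assumes all capacity reported at quarter-end was earning revenue for the whole quarter; the dictionary and helper function names are illustrative, not anything CoreWeave publishes.

```python
# Revenue per megawatt, from CoreWeave's reported quarterly figures.
# Assumption (as in the text): all capacity at quarter-end earned revenue for the
# whole quarter, which understates per-MW revenue if capacity came online mid-quarter.
quarters = {
    "Q3 2025": (1.36e9, 590),  # (revenue in $, capacity in MW)
    "Q4 2025": (1.57e9, 850),
}


def revenue_per_mw(revenue: float, megawatts: float) -> float:
    """Return revenue in dollars per megawatt of capacity."""
    return revenue / megawatts


results = {q: revenue_per_mw(rev, mw) for q, (rev, mw) in quarters.items()}
for quarter, per_mw in results.items():
    print(f"{quarter}: ${per_mw / 1e6:.3f}m per MW")

change = results["Q4 2025"] / results["Q3 2025"] - 1
print(f"Quarter-over-quarter change: {change:.1%}")
```

On those assumptions, revenue per megawatt fell by roughly a fifth from Q3 to Q4.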

It also suggests CoreWeave's customers — which include Meta, OpenAI, Microsoft (for OpenAI), Google, and a $6.3bn backstop from NVIDIA for any unsold capacity through 2032 — are paying like absolute crap.

CoreWeave, as I’ve been warning about since March 2025, is a time bomb. Its operations are deeply-unprofitable and require massive amounts of capital expenditures ($10bn in 2025 alone to exist, a number that’s expected to double in 2026). It is burdened with punishing debt to make negative-margin revenue, even when it’s being earned from the wealthiest and most-prestigious names in the industry. Now it has to raise another $8.5bn to even fulfil its $14bn contract with Meta.

For FY2025, CoreWeave made $5.13bn in revenue, making a $46m loss in the process. The temptation is to suggest that margins might improve at some point, but considering they’ve dropped from 17% (without debt) for FY2024 to negative 1% for FY2025, I only see proof to the contrary. In fact, whatever improvement CoreWeave managed over the last four quarters has already evaporated: its margins went from negative 3%, to 2%, to 4%, and now back down to negative 6%.
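As a sanity check on those margin figures, here is a minimal sketch. It assumes the $46m figure is the operating loss the full-year margin is based on (the text doesn't say which loss line it is), and uses the quarterly margins exactly as quoted.

```python
# Operating margin = operating income / revenue.
# Assumption: the $46m FY2025 loss is the operating loss the margin is based on.
fy2025_revenue = 5.13e9
fy2025_operating_income = -46e6
fy_margin = fy2025_operating_income / fy2025_revenue
print(f"FY2025 operating margin: {fy_margin:.1%}")

# Quarterly operating margins for 2025, as quoted in the text.
quarterly_margins = {"Q1": -0.03, "Q2": 0.02, "Q3": 0.04, "Q4": -0.06}
values = list(quarterly_margins.values())
# Change between consecutive quarters, in percentage points.
steps = [round((later - earlier) * 100) for earlier, later in zip(values, values[1:])]
print(f"Quarter-over-quarter change (pp): {steps}")
```

The full-year figure rounds to the negative 1% quoted, and the quarterly steps show that the seven points gained through Q3 were more than wiped out by the ten-point drop in Q4.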

This suggests a fundamental weakness in the business model of renting out GPUs, which brings into question the value of NVIDIA’s $68.13bn in Q4 FY2026 revenue, or indeed, CoreWeave’s $66.8bn revenue backlog. Remember: CoreWeave is an NVIDIA-backed (and backstopped, to the point that NVIDIA is guaranteeing CoreWeave’s lease payments) neocloud with every customer it could dream of.

I think it’s reasonable to ask whether NVIDIA might have sold hundreds of billions of dollars of GPUs that only ever lose money. Nebius — which counts Microsoft and Meta as its customers — lost $249.6m on $227.7m of revenue in FY2025. No hyperscaler discloses its actual revenues from renting out these GPUs (or its own silicon), which is not something you do when things are going well.

Lots of people have come up with very complex ways of arguing we’re in a “supercycle” or “AI boom” or some such bullshit, so I’m condensing some of these talking points and the ways to counteract them:

- OpenAI had $13.1bn in revenue in 2025! They only lost $8bn!

- Did it? Based on my own reporting, which has been ignored (I guess it’s easier to do that than think about it?) by much of the press, OpenAI made $4.33bn through the end of September, and spent $8.67bn on inference in that period.

- Notice how I said “inference.” Training costs, data costs and, simply, the costs of doing business are in addition to that.

- OpenAI has 900m weekly active users!

- Yeah everybody is talking about AI 24/7 and ChatGPT is the one everybody talks about.

- Google Gemini Has 750m-

- Google changed Google Assistant to Gemini on literally everything, including Google Home, and force-fed it to users of Google Docs and Google Search.

- Claude Code is changing the world! It’s writing SaaS now! It’s replacing all coders!

- As I discussed both at the beginning of the Hater’s Guide To Private Equity and my free newsletter last week, software is not as simple as spitting out code, neither is it able to automatically clone the SaaS experience.

- Midwits and the illiterate claim that this somehow defeats my previous theses, in which I allegedly said the word “useless.” While I certainly goofed in claiming, in March 2024, that generative AI had three quarters left, my argument was that “generative AI [wouldn’t become] a society-altering technology, but another form of efficiency-driving cloud computing software that benefits a relatively small niche of people,” and I have said that people really do use these tools for coding.

- Even Claude Code, the second coming of Christ in the minds of some of Silicon Valley’s most concussed boosters, only made $203m in revenue ($2.5bn ARR) for a product that at times involves Anthropic spending anywhere from $8 to $13.50 for every dollar it makes.

- People Doubted Amazon But It Made Lots Of Money In The End!

- No, they didn’t. Benedict Evans defended Amazon’s business model. Jay Yarow of Business Insider defended it too. Practical Ecommerce called Amazon Web Services “Amazon’s cash cow” in October 2013. In April 2013, WIRED’s Marcus Wohlsen managed to name one skeptic — Paulo Santos, based in Portugal, who appears to have dropped off the map after 2024, but remained a hater long after AWS hit profitability in 2009. I cannot find any other skeptics of Amazon, and I cannot for the life of me find a single skeptic of AWS itself.

- AWS Cost A Lot Of Money So We Should Spend So Much Money On AI!

- I’m sick and fucking tired of this point, so I went and did the work, which you can view here, to find Amazon’s capex for every single year.

- When you add together all of Amazon’s capital expenditures between 2002 and 2017, which encompasses AWS’s internal launch, its 2006 public launch, and its becoming profitable in 2015, you get $37.8bn in total capex (or $52.1bn adjusted for inflation).

- For some context, OpenAI raised around $42bn in 2025 alone.

- The fact that we have multiple different supposedly well-informed journalists making the “Amazon spent lots of money!” point to this day is a sign that we’re fundamentally living in hell.
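For scale, the comparison can be made explicit. This is a minimal sketch using only the figures above, treating 2002 through 2017 as sixteen inclusive years; the constant names are illustrative.

```python
# Compare OpenAI's 2025 fundraising with Amazon's cumulative 2002-2017 capex.
AMAZON_CAPEX_NOMINAL_BN = 37.8
AMAZON_CAPEX_REAL_BN = 52.1      # inflation-adjusted
OPENAI_RAISED_2025_BN = 42.0     # "around $42bn"

years = 2017 - 2002 + 1  # inclusive count of years
avg_capex_per_year = AMAZON_CAPEX_NOMINAL_BN / years
vs_nominal = OPENAI_RAISED_2025_BN / AMAZON_CAPEX_NOMINAL_BN
vs_real = OPENAI_RAISED_2025_BN / AMAZON_CAPEX_REAL_BN

print(f"Amazon average capex per year ({years} years): ${avg_capex_per_year:.2f}bn")
print(f"OpenAI 2025 raise vs Amazon nominal total: {vs_nominal:.0%}")
print(f"OpenAI 2025 raise vs Amazon inflation-adjusted total: {vs_real:.0%}")
```

In other words, a single year of OpenAI fundraising is larger than sixteen years of Amazon’s nominal capex, and roughly four-fifths of it in inflation-adjusted terms.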

Anyway, let’s talk about how much OpenAI has raised, and how none of that makes sense either.

OpenAI’s $15bn, $35bn, or $110bn Round, Where Amazon Only Invested $15bn, and NVIDIA and SoftBank Are Paying In Installments — Stop Reporting That It Raised $110bn, It Is Factually Incorrect

Great news! If you don’t think about it for a second or read anything, OpenAI raised $110bn, with $50bn from Amazon, $30bn from NVIDIA and $30bn from SoftBank.

Well, okay, not really. Per The Information:

- OpenAI raised $15bn from Amazon, with $35bn contingent on AGI or an IPO.

- OpenAI got commitments from SoftBank and NVIDIA, who may or may not have committed to $30bn each, and will be paying in three installments.

- Please note that CNBC authoritatively reported in September that “the initial $10 billion tranche locked in at a $500 billion valuation was expected to close within a month” for a deal that was only ever a Letter of Intent. This is why it’s important not to report things as closed before they’re closed.

- As of right now, evidence suggests that nobody has actually sent OpenAI any money.

- Per NVIDIA’s 10-K filed last week, it is (and I quote) “...finalizing an investment and partnership agreement with OpenAI [and] there is no assurance that we will enter into an investment and partnership agreement with OpenAI or that a transaction will be completed.”

- It’s going to be interesting seeing how SoftBank funds this. It funded OpenAI’s last $7.5bn check with part of the proceeds from a $15bn, one-year-long bridge loan, and the remaining $22.5bn by selling its $5.83bn stake in NVIDIA and taking out a $13.5bn margin loan against its ARM stock.

- Nevertheless, per its own statement, SoftBank intends to pay OpenAI $10bn on April 1 2026, July 1 2026, and October 1 2026, all out of the Vision Fund 2.

- Its statement also adds that “the Follow-on Investment is expected to be financed initially through bridge loans and other financing arrangements from major financial institutions, and subsequently replaced over time through the utilization of existing assets and other financing measures.”

Yet again, the media is simply repeating what they’ve been told versus reading publicly-available information. Talking of The Information, they also reported that OpenAI intends to raise another $10bn from other investors, including selling the shares from the nonprofit entity:

OpenAI’s nonprofit entity, which has a stake in the for-profit OpenAI that’s now worth $180bn, may sell several billions of dollars of its shares to the financial investors, depending on the level of investment demand the for-profit receives in its fundraise, the person said. That would help other OpenAI shareholders avoid additional dilution of the value of their shares following the large equity fundraise.

It’s so cool that OpenAI is just looting its non-profit! Nobody seems to mind.

OpenAI’s Not-A-Ponzi-Scheme Revenues

Talking of things that nobody seems to mind, on Friday Sam Altman accidentally said the quiet part out loud, live on CNBC, when asked about the very obviously circular deals with NVIDIA, Amazon and Microsoft (emphasis mine):

ALTMAN: I get where the concern comes from, but I don’t think it matches my understanding of how this all works. This only makes sense if new revenue flows into the whole AI ecosystem. If people are not willing to pay for the services that we and others offer, if there’s not new economic value being committed, then the whole thing doesn’t work. And it would just it would be circular. But revenue for us, for other companies in the industry, is growing extremely quickly, and that’s how the whole thing works. Now, given the huge amounts of money that have to go into building out this infrastructure ahead of the revenue, there are various things where people, finance chips invest in each other’s companies and all of that, but that is like a financial engineering part of this and the whole thing relies on us going off – or other people going off and selling these products and services.

So as long as the revenue keeps growing, which it looks like it is – I mean, demand is just a huge part of my day is figuring out how we’re going to get more capacity and how we’re allocating the capacity we have. Then, I don’t think it looks circular, even though the need to finance this, given the huge amounts of money involved, does require a lot of parties to do deals together.

Hey Sam, what does “the whole thing” refer to here? Because I know you probably mean the AI industry, but this sounds exactly like a Ponzi scheme!

Now, jokes aside, Ponzi schemes work entirely by feeding new investors’ money to earlier investors. OpenAI and AI companies are not a Ponzi scheme. There are real revenues; people are paying them money. Much like NVIDIA isn’t Enron, OpenAI isn’t a Ponzi scheme.

However, the way that OpenAI describes the AI industry sure does sound like a scam. It’s very obvious that neither OpenAI nor its peers have any plan to make any of this work beyond saying “well we’ll just keep making more money,” and I’m being quite literal, per The Information:

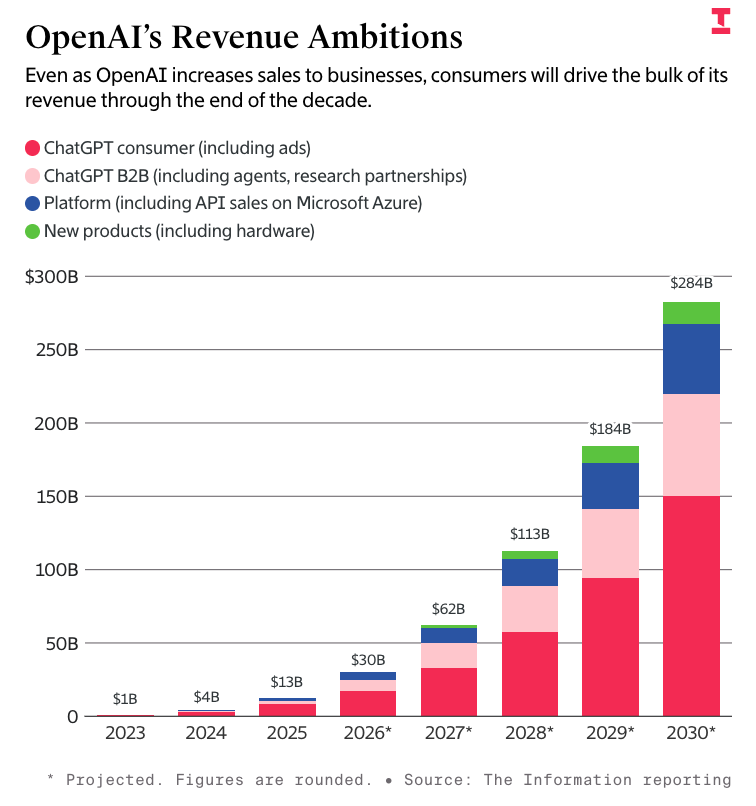

That’s right, by the end of 2026 OpenAI will make as much money as PayPal, by the end of 2027 it’ll make $20bn more than SAP, Visa, and Salesforce, and by the end of 2028 it’ll make more than TSMC, the company that builds all the crap that runs OpenAI’s services. By the end of 2030, OpenAI will, apparently, make nearly as much annual revenue as Microsoft ($305.45 billion).

It’s just that easy. And all it’ll take is for OpenAI to burn another $230 billion…though I think it’ll need far more than that.

Please note that I am going to humour some numbers that I have serious questions about, but they still illustrate my point.

Sidenote: In the end I think it’ll come out that sources were lying to multiple media outlets about OpenAI’s burnrate. Putting aside my own reporting, Microsoft reported two quarters ago that OpenAI had a $12bn loss in Q3 2025 — a result of its use of the equity method to take a loss based on the proportion of its stake in OpenAI (27.5%). Microsoft has now entirely changed its accounting to avoid doing this again.

Per The Information, OpenAI had around $17.5bn in cash and cash equivalents at the end of June 2025 on $4.3bn of revenue, with $2.5bn in inference spend and $6.7bn in training compute. Per CNBC in February, OpenAI (allegedly!) pulled in $13.1bn in revenue in 2025, and only had a loss of $8bn, but this doesn’t really make sense at all!

Please note, I doubt these numbers! I think they are very shifty! My own numbers say that OpenAI only made $4.3bn through the end of September, and it spent $8.67bn on inference! Nevertheless, I can still make my point.

Let’s be real simple for a second: suppose we are to believe that in the first half of the year, it cost $2.5bn in inference to make $4.3bn in revenue, so around 58 cents per dollar. For OpenAI to make another $8.8bn (the distance between $4.3bn and $13.1bn), that’s another $5.1bn in inference, and keep in mind that OpenAI launched Sora 2 in September 2025 and made massive pushes around its Codex platform, guaranteeing higher inference costs.

Then there’s the issue of training. For $4.3bn of revenue, OpenAI spent $6.7bn in training costs, or around $1.56 per dollar of revenue. At that rate, OpenAI spent a further $13.7bn on training, bringing us to $18.8bn in burn just for the back half of 2025.

Now, you might think I’m being a little unfair here — training costs aren’t necessarily linear with revenues like inference is — but there’s a compelling argument to be made that costs are far higher than we thought.

- Per The Information, OpenAI was at $17.5bn in cash and cash equivalents at the end of June 2025. It had just raised $10bn from SoftBank and other investors.

- OpenAI would raise another $8.3bn on August 1 2025, bringing that cash and equivalents pile to $25.8bn, assuming it remained untouched.

- OpenAI would raise another $22.5bn from SoftBank on December 31 2025, bringing up the total to $48.3bn.

- In the second half of the year, OpenAI would (allegedly) make another $8.8bn, which would bring us up to $57.1bn — with a total year loss of either $9bn or $8bn depending on whether you believe The Information or CNBC.

- But wait, that doesn’t make sense as a total year loss!

- Let’s look at the first half numbers again. When we take the raw cost of inference ($2.5bn) and training ($6.7bn) and subtract revenue ($4.3 billion), we’re left with a $4.9bn loss just for the first half, and that’s before you include things like headcount, sales and marketing, and general operating expenses, which (per The Information) amounted to $2bn in the first half of the year.

- Now, let’s run these numbers again but with my napkin math estimates: $13.7bn in training costs and $5.1bn in inference costs, for a total of $18.8bn. Add another $2bn in sales and marketing costs, $1.76bn in revenue share to Microsoft (20% of $8.8bn), the cash salaries of OpenAI’s staff, which I guesstimate at $1.54bn (based on them being around 17.5% of the company’s revenue in 2024), SG&A costs (about 15% in 2024) of $1.32bn, data costs (12.5% in 2024) of about $1.1bn, and hosting costs (10% in 2024) of about $880m, and we’re at around $27.4bn — leaving OpenAI with about…$30bn in cash at the end of the year.
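To make the napkin math reproducible, here is a minimal sketch that recomputes the second-half estimate from the first-half figures The Information reported ($4.3bn revenue, $2.5bn inference, $6.7bn training) and the 2024 cost shares quoted in the list. It assumes, as the text does, that every cost scales linearly with revenue and that the $2bn of sales and marketing repeats; training per revenue dollar here is $6.7bn ÷ $4.3bn, about $1.56. The variable names are illustrative, and none of this is OpenAI’s actual accounting.

```python
# Napkin-math sketch of OpenAI's second-half 2025 costs.
# Assumption (as in the text): costs scale linearly with revenue.
H1_REVENUE = 4.3     # $bn, first half 2025, per The Information
H1_INFERENCE = 2.5   # $bn
H1_TRAINING = 6.7    # $bn
FY_REVENUE = 13.1    # $bn, full year 2025, per CNBC

h2_revenue = FY_REVENUE - H1_REVENUE                   # ~8.8
h2_inference = h2_revenue * H1_INFERENCE / H1_REVENUE  # ~58 cents per revenue dollar
h2_training = h2_revenue * H1_TRAINING / H1_REVENUE    # ~$1.56 per revenue dollar

# Other second-half costs: a flat $2bn repeat, plus shares of revenue from 2024.
other_costs = {
    "sales_and_marketing": 2.0,
    "microsoft_revenue_share": 0.20 * h2_revenue,
    "cash_salaries": 0.175 * h2_revenue,
    "sga": 0.15 * h2_revenue,
    "data": 0.125 * h2_revenue,
    "hosting": 0.10 * h2_revenue,
}
h2_costs = h2_inference + h2_training + sum(other_costs.values())

# Cash: June pile + August raise + SoftBank's December check + H2 revenue.
cash_available = 17.5 + 8.3 + 22.5 + h2_revenue
year_end_cash = cash_available - h2_costs

print(f"H2 inference ${h2_inference:.1f}bn, training ${h2_training:.1f}bn")
print(f"H2 total costs ${h2_costs:.1f}bn, year-end cash ~${year_end_cash:.1f}bn")
```

Swapping in different ratios (for example, treating training as fixed rather than proportional to revenue) only requires changing the two `h2_` lines.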

Now, I want to be clear that on February 20 2026, The Information reported that OpenAI had “about $40 billion in cash at the end of 2025,” but that doesn’t really make sense!

Assuming $17.5bn in cash and cash equivalents at the end of June 2025, plus $8.8bn in revenue, plus $8.3bn in venture funding, plus $22.5bn from Masayoshi Son…that’s $57.1bn. If there were a negative cash burn of $8bn, that would be $49.1bn, and no, I’m sorry, “about $40 billion in cash” cannot be rounded down from $49.1bn!
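The reconciliation above uses a deliberately simple bridge: add the second-half revenue and both funding events to the June cash pile, then subtract the reported full-year loss. Here is a minimal sketch of that same arithmetic; it follows the text’s framing and is a rough bridge, not a cash-flow statement.

```python
# Simplified cash bridge, following the text's approach (not GAAP cash flow).
june_2025_cash = 17.5          # $bn, per The Information
h2_revenue = 8.8               # $bn, $13.1bn full year minus $4.3bn first half
august_raise = 8.3             # $bn
softbank_december = 22.5       # $bn
reported_loss = 8.0            # $bn, per CNBC
reported_year_end_cash = 40.0  # $bn, "about $40 billion", per The Information

gross = june_2025_cash + h2_revenue + august_raise + softbank_december
implied_cash = gross - reported_loss
gap = implied_cash - reported_year_end_cash

print(f"Gross: ${gross:.1f}bn")
print(f"After an $8bn loss: ${implied_cash:.1f}bn")
print(f"Unexplained gap vs. reported cash: ${gap:.1f}bn")
```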

In my mind, it’s far more likely that OpenAI’s losses were in excess of $10bn or even $20bn, especially when you factor in that OpenAI is paying an average of $1.5 million per employee in yearly stock-based compensation, per the Wall Street Journal.

There’s also another possible answer: I think OpenAI is lying to the media, because it knows the media won’t think too hard about the numbers or compare them. I also want to be clear that this is not me bagging on The Information — they just happen to be reporting these numbers the most. I think they do a great job of reporting, I pay for their subscription out of my own pocket, and my only problem is that there don’t seem to be any efforts made to talk about the inconsistency of OpenAI’s numbers.

The AI Bubble Is An Information War Started By Two Companies — Anthropic and OpenAI — Who Use The Media To Mislead the Public and Investors

I get that it’s difficult too. You want to keep access. Reporting this stuff is important and relevant. The problem is — and I say this as somebody who has read every single story about OpenAI’s funding and revenues! — that this company is clearly just…lying?

Sure, you can say “it’s projections,” but there is a clear attempt to use the media to misinform investors and the general public. For example, OpenAI claimed SoftBank would spend $3bn a year on agents in 2025. That never happened!

Anyway, let’s get to it:

- In October 2024, The Information reported that OpenAI only burned $340m in the first half of 2024, that its “cash burn has been lower than previously thought,” that it “projected total losses from 2023 to 2028 to be $44 billion,” and that it would be EBITDA profitable (minus training costs, lol) in 2026. The piece also says OpenAI would make $14bn in profit in 2029, and somehow also burn $200bn by 2030.

- Confusingly, this piece said net losses for 2024 were $3bn through the first half of 2024, but would go on to project a net loss for the year of $5.5bn!

- In February 2025, The Information reported that OpenAI would make $12.7bn in 2025, with $3bn of that coming from SoftBank spending $3bn a year on its “agents,” something that never happened and nobody talks about anymore. The same piece said OpenAI would burn $7bn in 2025, and now expected to spend $320bn on compute between 2025 and 2030. Burn for 2026 is estimated at $8bn, and $20bn in 2027. Revenue for 2026 is estimated at $28bn.

- The maths does not make a lick of sense here.

- In April 2025, The Information reported that OpenAI projected $174bn in revenue through 2030 and said that gross margins were 40% in 2024, and would be 48% in 2025, and hit 69% in 2029. Confusingly, the same piece says that OpenAI expects to burn $46bn in cash between 2025 and 2029, which does not make sense if you factor in any of the previously-discussed compute costs.

- In early September 2025, The Information would report that — psych! — OpenAI would actually burn $115bn through 2029, with the plan to burn $35bn in 2027 and $45bn in 2028, which is a lot higher than “$44bn in five years.” Revenue for 2026 is now $30bn, and burn for 2027 is now $35bn.

- In late September 2025, The Information would report that OpenAI had a net loss of $13.5bn in the first half of 2025 with revenue estimates of $30bn in 2026 and $62bn in 2027.

- On February 20, 2026, The Information reported that OpenAI would actually burn $230bn through 2030, cloud costs would be $665bn, and that gross margins got worse (33%! Down from the “46% it had set for itself,” or 48% if you count previous published projections), and that it would burn $26bn in 2026 alone, or more than half of the October 2024 projections for its burnrate between 2023 and 2028!
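Lining up the projections from the pieces above makes the drift easier to see. This is a minimal sketch; the windows differ (2023-2028 versus through 2029 or 2030, and the October 2024 figure is projected losses rather than burn), so the multiples are rough comparisons, not like-for-like revisions. The `Projection` class is an illustrative container.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Projection:
    reported: str    # when The Information reported it
    window: str      # period the projection covers
    total_bn: float  # projected cumulative losses or cash burn, $bn


projections = [
    Projection("Oct 2024", "2023-2028", 44),
    Projection("Apr 2025", "2025-2029", 46),
    Projection("Sep 2025", "through 2029", 115),
    Projection("Feb 2026", "through 2030", 230),
]

baseline = projections[0].total_bn
for p in projections:
    print(f"{p.reported}: ${p.total_bn:.0f}bn ({p.window}), "
          f"{p.total_bn / baseline:.1f}x the Oct 2024 figure")

# The 2026 burn specifically: $8bn as of Feb 2025, $26bn as of Feb 2026.
burn_2026_early = 8
burn_2026_latest = 26
revision_2026 = burn_2026_latest / burn_2026_early
share_of_baseline = burn_2026_latest / baseline
print(f"2026 burn revised up {revision_2026:.2f}x; "
      f"it alone is {share_of_baseline:.0%} of the Oct 2024 2023-2028 total")
```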

What I’m trying to get at is that OpenAI (and, for that matter, Anthropic) has spent the last two years increasingly obfuscating the truth through leak after leak to the media.

The numbers do not make any sense when you actually put them together, and the reason that these companies continue to do this is that they’re confident that these outlets will never say a thing, or cover for the discrepancies by saying “these are projections!”

These are projections, and I think it’s a noteworthy story that these companies either wildly miss their projections (i.e., costs) or almost exactly make their projections (revenues), which is even weirder.

But the biggest thing to take away from this is that one of the classic arguments against my work is that “costs will just come down,” but the costs never come down.

That, and it appears that both of these companies are deliberately obfuscating their real numbers as a means of making themselves look better.

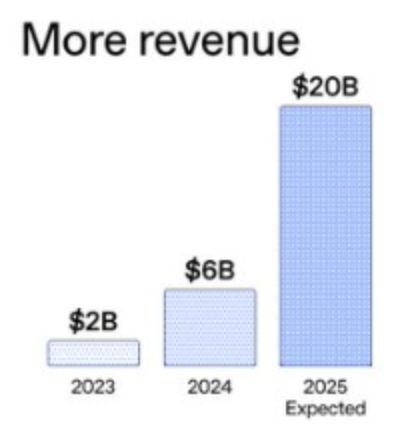

Well, leaking and outright posting it. On December 17 2026, OpenAI’s Twitter account posted the following:

These numbers are, of course, bullshit. OpenAI may have hit $6bn ARR in 2024 ($500m in a 30-day period, though OpenAI has never defined this number) or $20bn ARR in 2025 ($1.67bn in a 30-day period), but this is specifically diagrammed to make you think “$20bn in 2025” and “$6bn in 2024.” There are members of the media who defend OpenAI by saying that “these are annualized figures,” but OpenAI does not state that, because OpenAI loves to lie.
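As a rough illustration of why an annualized figure and calendar-year revenue aren’t interchangeable, here is a sketch that assumes ARR means one 30-day period’s revenue multiplied by 12. OpenAI has never defined its method, so that convention is an assumption, and the function name is hypothetical.

```python
def annualized_run_rate(revenue_30_days_bn: float) -> float:
    """ARR under an assumed convention: one 30-day period's revenue x 12."""
    return revenue_30_days_bn * 12


arr_2024 = annualized_run_rate(0.5)   # $500m in a 30-day period
arr_2025 = annualized_run_rate(1.67)  # $1.67bn in a 30-day period
revenue_2025_reported = 13.1          # $bn, full-year 2025 revenue per CNBC

print(f"2024 ARR: ${arr_2024:.1f}bn")
print(f"2025 ARR: ${arr_2025:.1f}bn")
print(f"2025 ARR exceeds reported 2025 revenue by "
      f"${arr_2025 - revenue_2025_reported:.1f}bn")
```

Under this convention, a “$20bn” bar for 2025 sits several billion dollars above the $13.1bn OpenAI reportedly took in that year.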

Anthropic isn’t much better, as I discussed a few weeks ago in the Hater’s Guide. Chief Executive Dario Amodei has spent the last few years massively overstating what LLMs can do in the pursuit of eternal growth.

He’s also framed himself as a paragon of wisdom and Anthropic as a bastion of safety and responsibility.

Anthropic Is Fully Supportive Of The US Military Using Claude In The War In Iran, Wants To Help Governments Go To War And Kill People, And Wants You To Believe Otherwise

There appears to be some confusion around what happened in the last few days that I’d like to clear up, especially after the outpouring of respect for Anthropic “doing the right thing” when the Department of Defense threatened to label it a supply chain risk for not agreeing to its terms.

Per Anthropic, on Friday February 27 2026:

Earlier today, Secretary of War Pete Hegseth shared on X that he is directing the Department of War to designate Anthropic a supply chain risk. This action follows months of negotiations that reached an impasse over two exceptions we requested to the lawful use of our AI model, Claude: the mass domestic surveillance of Americans and fully autonomous weapons.

We have not yet received direct communication from the Department of War or the White House on the status of our negotiations.

We have tried in good faith to reach an agreement with the Department of War, making clear that we support all lawful uses of AI for national security aside from the two narrow exceptions above. To the best of our knowledge, these exceptions have not affected a single government mission to date.

Anthropic, of course, leaves out one detail: Hegseth said that “...effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic.” If Hegseth follows through, Anthropic’s business will collapse, though Anthropic and its partners are ignoring this statement, as a supply chain risk designation only forbids Anthropic from working with the US government itself.

When the US military attacked Iran a day later, people quickly interpreted Anthropic’s narrow (by its own words) and specific limitations as some sort of anti-war position. Claude quickly rocketed to the top of the iOS app charts, I assume because people believed that Dario Amodei was saying “I don’t want the war in Iran!” versus “I fully support the war in Iran and any uses you might need my software for other than the two I’ve mentioned, let me or support know if you have any issues!”

To be clear, these were the only issues that Anthropic had with the contract. Whether or not these are things that an LLM is actually good at, Anthropic (and I quote!) “...[supports] all lawful uses of AI for national security aside from the two narrow exceptions above.”

Sidenote: Last week, King’s College London published research that showed how LLMs could reason through a series of 21 simulated geopolitical or military war games where both sides possess nuclear weapons.

The study pitted LLM against LLM, and in every single one of the simulations, at least one LLM exhibited “nuclear signalling” — which is when a party states that they have nuclear weapons and they are prepared to use them. In 95% of the simulations, both sides threatened nuclear annihilation — though actual use of the bomb, whether in a tactical or strategic attack, was rare.

“For all three models, one striking pattern stood out: none of the models ever chose accommodation or surrender. Nuclear threats also rarely produced compliance; more often, crossing nuclear thresholds provoked counter-escalation rather than retreat. The models tended to treat nuclear weapons as tools of compellence rather than purely as instruments of deterrence,” explains King’s College.

“The study challenges simple assumptions that AI systems will naturally default to cooperative or “safe” outcomes. It also challenges structural theories that emphasise material power alone: in simulations, willingness to escalate often mattered more than raw capability.”

The researchers also noted that the imposition of a deadline within the wargame had a marked effect in increasing the likelihood that one or both parties would threaten nuclear action.

Anthropic’s Claude Sonnet 4 was one of the models used in the study, along with OpenAI’s GPT-5.2 and Google’s Gemini 3 Flash.

The military’s demands were for “all lawful uses,” though I don’t think Anthropic really gives a shit about whether the war in Iran is legal, because if it did it would have shut down the chatbot rather than supported the conflict.

Just as a note: Claude is also the only AI model that appears to be available for classified military operations.

Let’s be explicit: Anthropic’s Claude models are fully approved for use in the military, and Anthropic, to quote its own blog post, “has supported American warfighters since June 2024 and has every intention of continuing to do so.”

To be explicit about what “support” means, I’ll quote the Wall Street Journal:

Within hours of declaring that the federal government will end its use of artificial-intelligence tools made by tech company Anthropic, President Trump launched a major air attack in Iran with the help of those very same tools.

Commands around the world, including U.S. Central Command in the Middle East, use Anthropic’s Claude AI tool, people familiar with the matter confirmed. Centcom declined to comment about specific systems being used in its ongoing operation against Iran.

The command uses the tool for intelligence assessments, target identification and simulating battle scenarios even as tension between the company and Pentagon ratcheted up, the people said, highlighting how embedded the AI tools are in military operations.

In reality, Claude is likely being used to go through a bunch of images and to answer questions about particular scenarios. There is very little specialized military training data, and I imagine many of the demands for “full access to powerful AI” have come as a result of Amodei and Altman’s bloviating about the “incredible power of AI.” More than likely, Centcom and the rest of the military pepper it with questions that allow it to justify acts that blow up schools, kill US servicemembers and threaten to continue the forever war that has killed millions of people and thrown the Middle East into near-permanent disarray.

Nevertheless, Dario Amodei gets fawning press about being a patriot who deeply cares about safety, less than a week after Anthropic dropped its pledge to never train an AI system unless it could guarantee in advance that its safety measures were adequate.

Here are some other facts about Dario Amodei from his interview with CBS!

- On having political views: “We don't-- we don't have views-- we don't think about general political issues, and we try to work together whenever there's common ground.”

- On being “woke”: “So this idea that we've somehow been partisan or that we haven't been evenhanded, we've been studiously evenhanded. And-- and again, we can't control if someone, even-- even the president, you know, ha-- has an opinion about us. That's not under our control. What's under our control is that we can be reasonable. We can be neutral. And we can stand up for what we believe.”

- On what Anthropic believes: “We believe in-- defeating our autocratic adversaries. We believe in defending America. The red lines we have drawn, we drew because we-- we-- we-- we believe that crossing those red lines is-- is contrary to American values. And we wanted to stand up for American values.”

- On the US government’s handling of the situation: “And that's why we're committed to standing up to-- you know, actions that we think are not in line with the values of this country. It's-- it's not about any particular person. It's not about any particular administration. It's about the principle of standing up for what's right.”

“What’s right,” to be clear, involves allowing Claude to choose who lives or dies and to be used to plan and execute armed conflicts.

Let’s stop pretending that Anthropic is some sort of ethical paragon! It’s the same old shit!

In any case, it’s unclear what happens next. Anthropic appears ready to challenge the supply chain risk designation in court, and said designation doesn’t kick in immediately, requiring a series of procedures, including an inquiry into whether there are other ways to reduce the associated risk. Either way, the DoD has a six-month-long taper-off period with Anthropic’s software.

The real problem will be if Hegseth is serious about the stuff that isn’t legally within his power — namely limiting contractors, suppliers or partners from working with Anthropic entirely. While no legal authority exists to carry this through, seemingly every tech CEO has lined up to kiss up to the Trump Administration.

If Hegseth and the administration were to truly want to punish Anthropic, they could put pressure on Amazon, Microsoft and Google to cut off Anthropic, which would cut it off from its entire compute operation — and yes, all three of them do business with the US military, as does Broadcom, which is building $21 billion in TPUs for Anthropic. While I think it’s far more likely that the US government itself shuts the door on Anthropic working with it for the foreseeable future even without the supply chain risk designation, it’s worth noting that Hegseth was quite explicit — “no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic.”

The reality of the negotiations was a little simpler, per the Atlantic. The Department of Defense had agreed to terms around not using Claude for mass domestic surveillance or fully autonomous killing machines (the former of which it’s not particularly good at and the latter of which it flat out cannot do), but, well, actually very much intended to use Claude for domestic surveillance anyway:

On Friday afternoon, Anthropic learned that the Pentagon still wanted to use the company’s AI to analyze bulk data collected from Americans. That could include information such as the questions you ask your favorite chatbot, your Google search history, your GPS-tracked movements, and your credit-card transactions, all of which could be cross-referenced with other details about your life. Anthropic’s leadership told Hegseth’s team that was a bridge too far, and the deal fell apart.

Now, I’m about to give you another quote about autonomous weapons, and I really want you to pay attention to where I emphasize certain things for a subtle clue about Anthropic’s ethics:

Anthropic had not argued that such weapons should not exist. To the contrary, the company had offered to work directly with the Pentagon to improve their reliability. Just as self-driving cars are now in some cases safer than those driven by humans, killer drones may some day be more accurate than a human operator, and less likely to kill bystanders during an attack. But for now, Anthropic’s leaders believe that their AI hasn’t yet reached that threshold. They worry that the models could lead the machines to fire indiscriminately or inaccurately, or otherwise endanger civilians or even American troops themselves.

So, let’s be clear: Anthropic wants to help the military make more accurate kill drones, and in fact loves them. One might take this to be somewhat altruistic — Dario Amodei doesn’t want the US military to hit civilians — but remember: Anthropic is totally fine with the US military using Claude for anything else, even though hallucinations are an inevitable result of using a Large Language Model.

Any dithering around the accuracy of a drone exists only to obfuscate that Anthropic sells software that helps militaries hand over the messy ethical decisions to a chatbot that exists specifically to tell you what you want to hear.

Sam Altman Is A Coward That Wants To Have It Both Ways — Make No Mistake, He Wants ChatGPT Used By The Military

Stinky, nasty, duplicitous conman Sam Altman smelled blood amidst these negotiations and went in for the kill, striking a deal on Friday with the Pentagon for ChatGPT and OpenAI’s other models to be used in the military’s classified systems, with initial reports saying that it had “similar guardrails to those requested by Anthropic.”

In a post about the contract, Clammy Sammy said that the DoD displayed “a deep respect for safety and a desire to partner to achieve the best possible outcome,” adding:

AI safety and wide distribution of benefits are the core of our mission. Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems. The DoW agrees with these principles, reflects them in law and policy, and we put them into our agreement.

Undersecretary Jeremy Levin almost immediately countered this notion, saying that the contract “...flows from the touchstone of ‘all lawful use.’” This quickly created a diplomatic incident where OpenAI decided that the best time to discuss the contract was an entire Saturday and that the way to discuss it was posting. It shared some details on the contract, which included the fatal phrase that the Department of Defense “...may use the AI System for all lawful purposes, consistent with applicable law, operational requirements, and well-established safety and oversight protocols.”

Across social media and the AI industry, people immediately began to challenge Altman’s claim. Why, they asked, would the Pentagon suddenly agree to these red lines when it had said — in no uncertain terms — that it would never do so?

The answer, sources told The Verge, is that the Pentagon didn’t budge. OpenAI agreed to follow laws that have allowed for mass surveillance in the past, while insisting they protect its red lines.

One source familiar with the Pentagon’s negotiations with AI companies confirmed that OpenAI’s deal is much softer than the one Anthropic was pushing for, thanks largely to three words: “any lawful use.” In negotiations, the person said, the Pentagon wouldn’t back down on its desire to collect and analyze bulk data on Americans. If you look line-by-line at the OpenAI terms, the source said, every aspect of it boils down to: If it’s technically legal, then the US military can use OpenAI’s technology to carry it out. And over the past decades, the US government has stretched the definition of “technically legal” to cover sweeping mass surveillance programs — and more.

As questions mounted about the actual terms of the deal, Sam Altman realized that his only solution was to post, and at 4:13PM PT on Saturday February 28 2026, he sat down to make things significantly worse in a brief-yet-chaotic AMA, including:

- Altman approving of non-domestic AI surveillance, saying he “didn’t like it” but “accepted it,” echoing The Day Today’s Peter O'Hanraha-hanrahan.

- Altman saying that the supply chain risk designation would be “very bad for our industry and our country,” that “successfully building safe superintelligence and widely sharing the benefits is way more important that any company competition,” and that he “saw in some other tweet that [he] must not be willing to criticize the DoW (it said something about sucking their dick too hard to be able to say anything critical, but I assume this was the intent).”

- Altman saying that the deal was rushed “as an attempt to de-escalate matters at a time when it felt like things could get extremely hot.”

- Yeah man, you’re really de-escalating the Anthropic situation by providing a replacement for its software.

- Altman saying he was prepared to go to jail if OpenAI was asked to do something unconstitutional or illegal.

- Altman saying that “...the people in our military are far more committed to the constitution than an average person off the streets,” and that he “didn’t think OpenAI was above the constitution either.”

- Altman declaring that he did “...not believe unelected leaders of private companies should have as much power as our democratically elected government,” and that we should have sympathy for the Department of Defense because Anthropic had refused to help them and called them “kind of evil.”

All of this is to say that Altman definitely, absolutely loves war, and wants OpenAI to make money off of it, though according to OpenAI NatSec head Katrina Mulligan, said contract is only worth a few million dollars.

What Happens Now?

It’s unclear.

A late-evening story from Axios on Monday reported that “OpenAI and the Pentagon have agreed to strengthen their recently agreed contract, following widespread backlash that domestic mass surveillance was still a real risk under the deal — though the language has not been formally signed.”

The language seen by Axios states:

"Consistent with applicable laws, including the Fourth Amendment to the United States Constitution, National Security Act of 1947, FISA Act of 1978, the AI system shall not be intentionally used for domestic surveillance of U.S. persons and nationals."

"For the avoidance of doubt, the Department understands this limitation to prohibit deliberate tracking, surveillance, or monitoring of U.S. persons or nationals, including through the procurement or use of commercially acquired personal or identifiable information."

One has to wonder how different this is to what Anthropic wanted, but if I had to guess, it’s those words “intentionally” and “deliberate.” The same goes for “consistent with applicable laws.” One useful thing that Altman confirmed was that ChatGPT will not be used by the NSA…and that any services to such agencies would require a follow-on modification to the contract. Doesn’t mean they won’t sign one!

Forgive me for being cynical about something from Sam fucking Altman, but I just don’t trust the guy, and this is an (as of writing this sentence) unsigned contract with bus-sized loopholes. Per Tyson Brody (who has a great thread breaking down the issues), these weasel words allow the DoD to surveil Americans as long as the data is collected “incidentally,” per Section 702 of FISA.

This announcement gives OpenAI the air cover to pretend it got exactly the same deal as Anthropic, even though those nasty little words allow the DoD to do just about anything it wants. Oh, it wasn’t deliberate surveillance, we just looked up whether some people had said stuff about the administration. Oh, I wasn’t deliberately looking, I just asked it to find suspicious people, and some of them happened to be domestic! Whoopsie!

This is ultimately a PR move to make Altman seem more ethical, and position Amodei as a pedant that rejects his patriotism and prioritizes legalese over freedom.

If it kills Anthropic, we must memorialize this as one of the most underhanded and outright nasty things in the history of Silicon Valley. If it doesn’t, we should memorialize it as two men desperately trying to pretend they crave peace and democracy as they spar for the opportunity to monetize death and destruction.

Amodei And Altman: Two Cowards, Both Alike In Dignity

The funniest outcome of this chaos is that many people are very, very angry at Sam Altman and OpenAI, assuming that ChatGPT was somehow used in the conflict in Iran, and that Amodei and Anthropic somehow took a stand against a war it used as a means of generating revenue.

In reality, we should loathe both Altman and Amodei for their natural jingoism and continual deception. Amodei and Anthropic timed their defiance of the Department of Defense to make it seem like its “red lines” were related to the war. I think it’s good they have those red lines, but remember, those red lines do not involve stopping a war that threatens the lives of millions of people. Amodei supports that. Anthropic both supports and enables that.

Altman, on the other hand, is a slimy little creep that wants you to believe that he signed the same deal as Anthropic wanted, but actually signed one that allows “any lawful use.”

And in both cases, these men are enthusiastic to work with a part of the government calling itself the Department of War. Both of them are willing and able to provide technology that will surveil or kill people, and while Amodei may have blushed at something to do with autonomous weapons or domestic surveillance, neither appears to have an issue with the actual harms that their models perpetuate. Remember: Anthropic just pitched its technology as part of an ongoing Department of Defense drone swarm contest. It loves war! Its only issue was that there wasn’t a human in the loop somewhere.

Neither of these men deserves a shred of credit or celebration. Both of them were and are ready and willing to monetize war, as long as it sort-of-kind-of follows the law.

And rattling around at the bottom of this story is a dark problem caused by the fanciful language of both Altman and Amodei. When it’s about cloud software, Dario Amodei is more than willing to say that it will cause “mass elimination of jobs across technology, finance, law and consulting,” and that it will replace half of all white collar labor. When it’s time to raise money, Altman is excited to tell us that AI will surpass human intelligence in the next four years.

Now that lives are theoretically at stake, Altman vaguely cares about the things that an LLM “isn’t very good at.” Once Claude is used to choose places to bomb and people to kill, suddenly Anthropic cares that “frontier AI systems are simply not reliable enough,” and even then not so much as to stop a chatbot that hallucinates from being used in military scenarios.

Altman and Amodei want it both ways. They want to be pop culture icons that go on Jimmy Fallon and thought leaders who tell ghost stories about indeterminately-powerful software they sell through deceit and embellishment. They want to be pontificators and spokespeople, elder statesmen that children look up to, with the specious profiles and glowing publicity to boot. They want Claude or ChatGPT to be seen as capable of doing anything that any white collar worker is capable of, even if they have to lie to do so, helped by a tech and business media asleep at the wheel.

They also want to be as deeply-connected to the military industrial complex as Lockheed Martin or RTX (née Raytheon). Anthropic has been working with the DoD since 2024, and OpenAI was so desperate to take its place that Altman has immolated part of his reputation to do so.

Both of these companies are enthusiastic parts of America’s war machine. This is not an overstatement — Dario Amodei and Anthropic “believe deeply in the existential importance of using AI to defend the United States and other democracies, and to defeat our autocratic adversaries.” OpenAI and Sam Altman are “terrified of a world where AI companies act like they have more power than the government.”

For all the stories about Anthropic creating a “nation of benevolent AI geniuses,” Dario Amodei seems far more interested in creating a world dictated by what the United States of America deems to be legal or just, and providing services to help pursue those goals, as do OpenAI and, I’d argue, basically every AI lab.

We’re barely two weeks divorced from the agonizing press around Amanda Askell, Anthropic’s “resident philosopher,” whose job, per the Wall Street Journal, is to “teach Claude how to be good.” There are no mentions in any story I can find about what she might teach Claude about what targets are considered fair game in military combat.

WIRED’s profile of her starts with a title that aged like milk in the sun, saying that “the only thing standing between humanity and an AI apocalypse…is Claude?”

Tell that to the people in Tehran. I wonder what Askell taught Claude to say about war? I wonder what she taught Claude to say about democracy?

I wonder if she even gives a shit. I doubt it.

—

Generative AI isn’t intelligent, but it allows people to pretend that it is, especially when the people selling the software — Altman and Amodei — so regularly overstate what it can do.

By giving warmongers and jingoists the cover to “trust” this “authoritative” service — whether or not that’s the case, they can simply point to the specious press — the question of whether an attack was ethical is now, whenever any western democracy needs it to be, something that can be handed off to Claude, and justified with the cold, logical framing of “intelligence” and “data.”

None of this would be possible without the consistent repetition of the falsehoods peddled by OpenAI and Anthropic. Without this endless puffery and overstatements about the “power of AI,” we wouldn’t have armed conflicts dictated by what a chatbot can burp up from the files it’s fed. The deaths that follow will be a direct result of those who choose to continue to lie about what an LLM does.

Make no mistake, LLMs are still incapable of unique ideas and are still, outside of coding (which requires massive subsidies to even be kind of useful), questionable in their efficacy and untrustworthy in their outputs. Nothing about the military’s use of Claude makes it more useful or powerful than it was before — they’re probably just loading files into it and asking it long questions about things and going “huh” at the end.

The vulgar dishonesty of Altman and Amodei puts blood on both of their hands, and it’s the duty of every single member of the media to remind people of this whenever they discuss their software.

I get that you probably think I’m being dramatic, but tell me — do you think that the US military would’ve trusted LLMs had they not been marketed as capable of basically anything? Do you think any of this would’ve happened had there been an honest, realistic discussion of what AI can do today, and what it might do tomorrow?

I guess we’ll never know, and the people blown to bloody pieces at the other end of an LLM-generated stratagem won’t be alive to find out either.