Soundtrack: Metallica — …And Justice For All

Bear with me, readers. I need to do a little historical foreshadowing to fully explain what’s going on.

In the run-up to the great financial crisis, unscrupulous lenders issued around 1.9 million subprime loans, with many of them being adjustable rate mortgages (ARMs) with variable rates that, after a two-or-three-year-long introductory period, would adjust every twelve months, per CBS News in July 2006:

On a $200,000 ARM that began a few years ago, the initial rate was around 4.5 percent. When the ARM adjusts to 6.5 percent, the monthly payment will increase from $1,013 to $1,254, or a rise of almost 24 percent.

Although interest rates have increased more than 4 percentage points since 2004, most ARMs typically cap the amount of the annual rate increase to 2.5 percentage points per year. Therefore, these increases are only just the beginning and it's very likely that the people experiencing an increased ARM payment this year will see a similar rise again in 12 months.
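The arithmetic in the CBS example can be roughly reproduced with the standard level-payment amortization formula. This is only a sketch, not the method CBS used: it assumes a 30-year fully amortizing loan and a two-year introductory period, neither of which the quoted passage states, and the function names are hypothetical.

```python
def monthly_payment(principal: float, annual_rate: float, months: int) -> float:
    """Level monthly payment that fully amortizes `principal` over `months`."""
    r = annual_rate / 12  # monthly interest rate
    if r == 0:
        return principal / months
    return principal * r / (1 - (1 + r) ** -months)


def balance_after(principal: float, annual_rate: float, months: int, paid: int) -> float:
    """Remaining balance after `paid` level payments have been made."""
    r = annual_rate / 12
    payment = monthly_payment(principal, annual_rate, months)
    if r == 0:
        return principal - payment * paid
    growth = (1 + r) ** paid
    return principal * growth - payment * (growth - 1) / r


# Assumptions (not from the article): 30-year term, rate resets after 24 months.
TERM_MONTHS = 360
INTRO_MONTHS = 24

teaser = monthly_payment(200_000, 0.045, TERM_MONTHS)
remaining = balance_after(200_000, 0.045, TERM_MONTHS, INTRO_MONTHS)
reset = monthly_payment(remaining, 0.065, TERM_MONTHS - INTRO_MONTHS)

print(f"teaser payment: ${teaser:,.0f}")
print(f"payment after reset: ${reset:,.0f}")
print(f"increase: {reset / teaser - 1:.0%}")
```

Under these assumptions the teaser payment matches the quoted $1,013, and the reset payment lands close to (though not exactly on) the quoted $1,254, since the exact figure depends on how long the introductory period ran.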

At the time, 18% of homeowners had adjustable-rate mortgages, which also made up more than 25% of new mortgages in the first quarter of 2006, with (at the time) over $330 billion of mortgages expected to adjust upwards. Things were grimmer beneath the surface. A question on JustAnswer from 2009 showed a homeowner that was about to lose their house after being conned into a negative amortization loan — a mortgage where payments didn’t actually cover the interest, meaning that each month the balance increased. Dodgy lenders were given bonuses for selling more mortgages, whether or not the person on the other end was capable of paying, and by November 2007, around two million homeowners held $600 billion of ARMs.

Yet the myth of the subprime mortgage crisis was that it was caused entirely by low income borrowers. Per Duke’s Manuel Adelino:

We found there was no explosion of credit offered to lower-income borrowers. In fact, home ownership rates among the poorest 20 percent of Americans fell during the boom because those buyers were being priced out of the market. Instead, we found credit was expanded across the board. Everybody was playing the same game. But credit expanded most drastically in areas where house prices were rising the most, and these were markets that were beyond the reach of lower-income borrowers.

The overwhelming majority of mortgages were going to middle income and relatively high income households during the boom, just as they have always done.

Although The Big Short’s dramatic “stripper with six properties” scene made for a vivid demonstration of the subprime problem, the reality was that everybody got taken in by teaser rate mortgages, driving up the value of properties based on a housing market that was only made possible by mortgages that were expressly built to hide the real costs as interest rates and borrower payments rose every six to 36 months. I’ll add that near-prime mortgages (for borrowers with just-below-prime credit scores) were also growing, with over 1.1 million of them in 2005, when they represented nearly 32% of all loans.

Many people who bought houses that they couldn’t afford did so based on a poor understanding of the terms of their mortgage, thinking that the value of housing would continue to climb as it had for over a hundred years, and/or the belief that they’d easily be able to refinance the loans. Even as things deteriorated toward the middle of the 2000s, people came up with rationalizations as to why things would work out, such as Anthony Downs of The Brookings Institution, who in October 2007 said the following in a piece called “Credit Crisis: The Sky is not Falling”:

U.S. stock markets are gyrating on news of an apparent credit crunch generated by defaults among subprime home mortgage loans. Such frenzy has spurred Wall Street to cry capital crisis. However, there is no shortage of capital – only a shortage of confidence in some of the instruments Wall Street has invented. Much financial capital is still out there looking for a home.

As this brief describes, the facts hardly indicate a credit crisis. As of mid-2007, data show that prices of existing homes are not collapsing. Despite large declines in new home production and existing home sales, home prices are only slightly falling overall but are still rising in many markets. Default rates are rising on subprime mortgages, but these mortgages—which offer loans to borrowers with poor credit at higher interest rates—form a relatively small part of all mortgage originations. About 87 percent of residential mortgages are not subprime loans, according to the Mortgage Bankers Association’s delinquency studies.

Brookings also added that “...the vast majority of subprime mortgages are likely to remain fully paid up as long as unemployment remains as low as it is now in the U.S. economy.” At the time, US unemployment was 4.7%, but a year later it was at 6.5%, and would peak at 10% in October 2009.

In an article from the December 2004 issue of Economic Policy Review, Jonathan McCarthy and Richard W. Peach argued that there was “little basis” for concerns about housing prices, with “home prices essentially moving in line with increases in family income and declines in nominal mortgage interest rates,” and hand-waved any concerns based on vague statements about “demand”:

Our main conclusion is that the most widely cited evidence of a bubble is not persuasive because it fails to account for developments in the housing market over the past decade. In particular, significant declines in nominal mortgage interest rates and demographic forces have supported housing demand, home construction, and home values during this period. Taking these factors into account, we argue that market fundamentals are sufficiently strong to explain the recent path of home prices and support our view that a bubble does not exist.

As for the likelihood of a severe drop in home prices, our examination of historical national home prices finds no basis for concern. Even during periods of recession and high nominal interest rates, aggregate real home prices declined only moderately.

From the outside, this made it appear that the value of housing was exponential, and that the “pent-up demand” for homes necessitated a massive boom in construction, one that peaked in January 2006 with 2.27 million new homes built. A year later, this number collapsed to 1.084 million, and in January 2009, only 490,000 new homes had been built in America, the lowest it had been in history.

Denial rates for mortgages declined drastically (along with the increase in things like 40-year or 50-year mortgages), which meant that suddenly anybody was able to get a house, which made it only seem logical to build more housing. Low interest rates before 2006 allowed consumers to take on mountains of new credit card debt, rising to as high as 20% of household incomes in 2007, to the point that by the 2000s, credit card companies were making more money from credit card lending than the fees from people using the credit cards, with $65 billion of the $95 billion of the credit card industry’s revenue coming from interest on debt, with lending-related penalty fees and cash advance fees contributing another $12.4 billion, per Philadelphia Fed Economist Lukasz Drozd.

While the precise order of events is a little more complex, the general gist of the subprime mortgage crisis was straightforward: easily-available money allowed massive amounts of people — many of whom couldn’t afford to buy these houses outside of the easy money that funded the bubble — to enter the housing market, which in turn made it much easier to sell a house for a much higher price, which inflated the value of housing.

People made decisions based on fundamentally-flawed information. In January 2004, the Bush administration declared that America’s economy was on the path to recovery, with small businesses creating the majority of new jobs and the stock market booming. Debt was readily-available across the board, with commercial and industrial loans spiking along with consumer debt (including a worrying growth in subprime auto loans). The good times were rolling, as long as you didn’t think about it too hard.

But, as I said, the chain of events was simple: it was easy to borrow money to buy a house, which meant lots of people were buying houses, which meant that the value of a house seemed higher than it was outside of the easy money era. Easily-available money put lots of cash into the economy, which led to higher prices, which led to inflation, which forced the Federal Reserve to raise interest rates 17 times in the space of two years, which made it harder to get any kind of loan, which made it harder to get a mortgage, which made it harder to sell a house, which made people sell houses for cheaper, which lowered the value of houses, which made it harder to refinance the bad loans, which meant people lost their homes to foreclosure, which in turn lowered the value of housing, all as demand for housing dropped because nobody was able to buy housing.

The underlying problems were, ultimately, the illusion of value and mobility. Those borrowing at the time believed they had invested in something with a consistent (and consistently-growing) value — a house — and would always have easy access to credit (via credit cards and loans), as before-tax family income had never been higher. In the beginning of 2007, delinquencies on consumer and business loans climbed, abandoned housing developments grew, and a US economy dependent on the housing bubble (per Paul Krugman’s “That Hissing Sound” from August 2005) began to stumble. By November 2009, 23% of US consumer mortgages were underwater (meaning they were worth less than their loans).

The housing bubble was created through easily-available debt, insane valuations based on debt-fueled speculation, do-nothing regulators (like eventual Fed Chair Ben Bernanke, who said in October 2005 that there was no housing bubble) and consumers being sold an impossible, unsustainable dream by people financially incentivized to make them rationalize the irrational, and believe that nothing bad will ever happen.

In February 2005, 40% ($19 billion) of IndyMac Bancorp’s mortgage originations in a single quarter came from a “Pay-Option ARM,” which started with a 1% teaser rate which jumped in a few short months to 4% or more, with frequent adjustments. Washington Mutual CEO Kerry Killinger said in 2003 that he wanted WaMu to be “the Wal-Mart of banking,” and did so by using (to quote the New York Times) “relaxed standards,” including issuing a mortgage to a mariachi singer who claimed a six-figure income and verified it using a single photo of himself.

By the time it collapsed in September 2008, WaMu had over $52.9 billion in ARMs and $16.05 billion in subprime mortgage loans.

Had Washington Mutual and the many banks making dodgy ARM and subprime loans underwritten loans based on the actual creditworthiness of their applicants, there wouldn’t have been a housing bubble, because many of these borrowers would’ve been unable to pay their mortgages, and thus wouldn’t have been deemed creditworthy, and thus no apparent housing demand would’ve grown.

In very simple terms, the “demand” for housing was inflated by a deceitfully-priced product that undersold its actual costs, and through that deceit millions of people were misled into believing said product was viable.

Did you work out where this is going yet?

The Subprime AI Crisis Begins

In September 2024, I raised my first concerns about a Subprime AI Crisis:

I hypothesize a kind of subprime AI crisis is brewing, where almost the entire tech industry has bought in on a technology sold at a vastly-discounted rate, heavily-centralized and subsidized by big tech. At some point, the incredible, toxic burn-rate of generative AI is going to catch up with them, which in turn will lead to price increases, or companies releasing new products and features with wildly onerous rates — like the egregious $2-a-conversation rate for Salesforce’s “Agentforce” product — that will make even stalwart enterprise customers with budget to burn unable to justify the expense.

This theory is important, and thus I’m going to give it a lot of time and love to break it down.

That starts with the parties involved, and how the economics involved get worse over time, returning to my theory of “AI’s chain of pain,” and the hierarchy of how the actual AI economy works.

How A Dollar Moves Through The AI Industry, And How Consumers Are Misled With Subsidies

The AI industry has done a great job of obfuscating exactly how brittle its economics really are, and as a result, I need to explain how money is raised, how it is deployed, and where the economics begin to break down.

The Funders

Generally, AI is funded from only a few places:

- Data centers raise debt from either banks, private credit, private equity or “business development companies,” non-banking entities that borrow money from banks to lend to risky companies.

- In an analysis of 26 prominent data center deals, I found (back in December 2025) several names — Blue Owl, MUFG (Mitsubishi), Goldman Sachs, JP Morgan Chase, Morgan Stanley, SMBC (Sumitomo Mitsui) and Deutsche Bank — that come up regularly.

- AI Labs (and AI startups) raise funding from venture capitalists (EG: Dragoneer (Anthropic, OpenAI, Perplexity) and Founders Fund (Anthropic, OpenAI)), hyperscalers (Google, Amazon, NVIDIA, Microsoft, all of whom have now invested in both OpenAI and Anthropic), sovereign wealth funds (GIC, Singapore’s sovereign wealth fund, invested in Anthropic), and even banks providing lines of credit, as they did for both Anthropic and OpenAI.

- Many of the big names in data center development (who I believe have all, in some way, backed CoreWeave) funded those lines of credit, including Morgan Stanley, SMBC, JPMorgan and MUFG.

Some things to keep note of:

- Those common names are points of failure, in particular SMBC and MUFG, two large Japanese banks that have aggressively loaned to just about every part of the AI economy. This pairs badly with the fact that the Japanese government is considering interest rate hikes thanks to the continuing chaos in the Middle East, which will make debt more expensive.

- Venture capitalists are funded by limited partners (EG: pension funds, investment banks and wealthy individuals), and the venture capital industry is facing an historic liquidity crisis (IE: they can’t raise money and their investments aren’t selling), which means that it cannot sustain the AI industry forever.

The AI Industry, And The Mostly-Subsidized AI Economy

- NVIDIA (and other hardware sellers to a much lesser extent) sells GPUs and the associated hardware to data center developers and hyperscalers.

- At around $42 million a megawatt between GPUs, data center and power construction, these data centers are almost entirely paid using debt.

- This is the only link in the chain that is really profitable.

- Data center developers rent their GPUs to AI labs and hyperscalers. Developers, who raised $178.5 billion in debt in the US alone last year, must borrow heavily to fund buildouts, and due to many of these projects being run by either brand-new or relatively new developers, debt costs are higher.

- As a result, based on my premium data center model, many data center projects are unprofitable even with a paying customer, and that’s assuming they even get built.

- To make matters worse, as I discussed last week, only 5GW of data center capacity out of over 200GW announced is actually under construction globally, which means many of these loans are currently on interest-only payments.

- All evidence points to GPU compute being either a low- or negative-margin business. CoreWeave (the largest, best-funded and NVIDIA-backed AI compute provider) had an operating margin of -6% and net loss margin of -29% in 2025.

- CoreWeave’s largest customers are Microsoft, OpenAI and NVIDIA, which means that it should, in theory, be getting the best rate around.

- Hyperscalers like Google, Meta, Amazon, and Microsoft both rent GPUs from data center providers and rent GPUs to AI labs, as well as offering API access to some AI labs’ models: Google and Amazon sell Anthropic’s, while Microsoft sells OpenAI’s models alongside its own models and others like xAI’s Grok.

- Hyperscalers steadfastly refuse to talk about their AI revenues, and do not break out costs.

- I would also put Oracle in this bucket.

- AI labs rent GPUs from either hyperscalers or data center companies to either train models or run inference (creating the outputs of models), sell access to models via their API, and offer subscription services to both consumer and business customers.

- Important detail: in almost every case, an AI lab must make an up front commitment, likely with a prepayment, to secure future capacity. This means that AI labs are often having to pony up massive amounts of up-front capital on top of their incredibly high ongoing costs.

- Anthropic has made $5 billion in revenue and spent $10 billion on compute to date, and had to raise another $30 billion in February 2026 after raising about $16.5 billion in 2025 alone.

- Through September 2025, OpenAI made $4.3 billion in revenue and spent $8.67 billion on inference alone.

- Neither of these companies has a path to profitability.

- AI startups buy access to models via AI labs’ API, building services that have “AI features” powered by said models, paid on a per-million token basis (for input tokens (user-fed data) and output tokens (model outputs)).

- Every single AI startup is unprofitable, and every AI startup functions by offering a service powered by AI models provided by AI labs. In every case that I’ve found, these providers always offer far more in token burn than the cost of their subscriptions.

- Consumers and businesses pay for monthly subscriptions or, in some cases, API access to models.

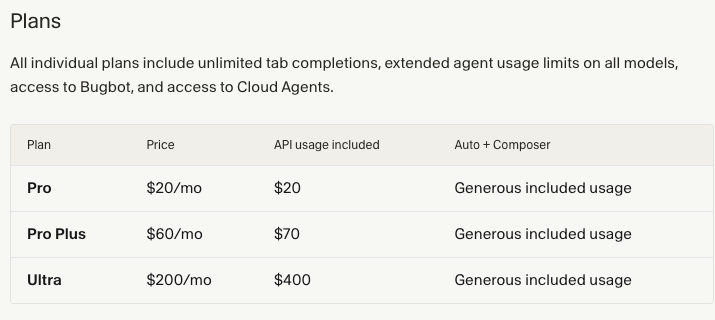

- Customers paying for AI services in most cases pay for a monthly service, such as Anthropic’s Claude Pro or Max or Perplexity Pro/Max, running from $20 a month to $200 a month.

- These subscriptions for the most part mask the amount of tokens that you are actually burning as a customer, but in every single case that I’ve found, that amount is always in excess of the subscription cost.

What Does “Subsidized AI” Mean?

This is a crucial point, so stay with me.

AI model providers charge a per-million-token rate for inputs (things you feed in) and outputs, which are either the things that the model produces (such as an image, text or code) or the “chain of thought reasoning” many models now rely upon, where they take an input, generate a plan (which is an “output”) and then do stuff based on said plan.

AI startups, for the most part, do not have their own models, and thus must pay OpenAI or Anthropic (or other providers to a much lesser extent) to build services using them.

When you pay for access to an AI startup’s service — which, of course, includes OpenAI and Anthropic — you do so for a monthly fee, such as $20, $100 or $200-a-month in the case of Anthropic’s Claude, Perplexity’s $20 or $200-a-month plan, or OpenAI’s $8, $20, or $200-a-month subscriptions. In some enterprise use cases, you’re given “credits” for certain units of work, such as how Lovable allows users “100 monthly credits” in its $25-a-month subscription, as well as $25 (until the end of Q1 2026) of cloud hosting, with rollovers of credits between months.

When you use these services, the company in question then pays for access to the AI models in question, either at a per-million-token rate to an AI lab, or (in the case of Anthropic and OpenAI) whatever cloud provider is renting them the GPUs to run the models. A token is basically ¾ of a word.

As a user, you do not experience token burn, just the process of inputs and outputs. AI labs obfuscate the cost of services by using “tokens” or “messages” or 5-hour-rate limits with percentage gauges, and you, as the user, do not really know how much any of it costs. On the back end, AI startups are annihilating cash, with Anthropic, up until recently, allowing you to burn upwards of $8 in compute for every dollar of your subscription. OpenAI allows you to do the same, though it’s hard to gauge by how much.
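To make the gap between a flat subscription and metered token costs concrete, here is a minimal sketch of the arithmetic. Every number in it is hypothetical: the per-million-token prices, the monthly usage and the $20 subscription are placeholders chosen for easy math, not any provider’s actual rates, and API list prices stand in for a lab’s real compute cost, which is not public.

```python
from dataclasses import dataclass


@dataclass
class TokenPricing:
    """Per-million-token API prices in dollars (illustrative values only)."""
    input_per_million: float
    output_per_million: float


def token_cost(pricing: TokenPricing, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of a month's usage at per-million-token rates."""
    return (
        input_tokens / 1_000_000 * pricing.input_per_million
        + output_tokens / 1_000_000 * pricing.output_per_million
    )


def burn_per_subscription_dollar(cost: float, subscription: float) -> float:
    """Dollars of token cost incurred for each dollar of subscription revenue."""
    return cost / subscription


# Hypothetical numbers, chosen only to make the arithmetic easy to follow.
pricing = TokenPricing(input_per_million=3.0, output_per_million=15.0)
cost = token_cost(pricing, input_tokens=20_000_000, output_tokens=4_000_000)
ratio = burn_per_subscription_dollar(cost, subscription=20.0)

print(f"usage cost ${cost:.2f}, or ${ratio:.2f} burned per subscription dollar")
```

Any ratio above 1.0 means the provider is paying out more in tokens than the subscriber pays in, which is the subsidy described above.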

This is where the economic problem has begun. When the AI bubble started, venture capitalists flooded AI startups with cash, encouraging them to create hypergrowth businesses using, for the most part, monthly subscription costs that didn’t come close to covering the costs.

As a result, many AI companies have experienced rapid growth selling a product that can only exist with infinite resources.

The problem is fairly simple: providing AI services is very expensive, and costs can vary wildly depending on the customer, input and output, the latter of which can change dramatically depending on the prompt and the model itself. A coding model relies heavily on chain-of-thought reasoning, which means that despite the price of tokens coming down (which does not mean the cost of providing them has decreased; it’s a marketing move), models are using far, far more tokens, increasing costs across the board.

And consumers crave new models. They demand them. A service that doesn’t provide access to a new model cannot compete with those that do, and because the costs of models have been mostly hidden from users, the expectation is always the newest models provided at the same price.

As a result, there really isn’t any way that these services make sense at a monthly rate, and every single AI company loses incredible amounts of money, all while failing to make that much revenue in the first place.

For example, Harvey is an AI tool for lawyers that just raised $200 million at an $11 billion valuation, all while having an astonishingly small $190 million in ARR, or $15.8 million a month. It raised another $160 million in December 2025, after raising $300 million in June 2025, after raising $300 million in February 2025.

Cursor is an AI coding tool that raised $160 million in 2024 (as of December 2024, it had $48 million ARR, or around $4 million of monthly revenue), $900 million in June 2025 ($500 million ARR, or $41.6 million a month), and $2.3 billion in November 2025 ($1 billion ARR, or $83 million a month). As of March 2, 2026, Cursor was at $2 billion annualized revenue, or $166 million in monthly revenue.

- Cursor has, at this point, raised $3.36 billion, and turned it into, at best, about a billion dollars of revenue, and that’s assuming it linearly grew between periods versus (more likely) having up and down months.
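The “about a billion dollars” estimate can be sanity-checked with a quick linear interpolation between the ARR figures quoted above. This is a rough sketch under stated assumptions: the month offsets approximate the dates given, revenue before December 2024 is ignored, and revenue is assumed to grow in a straight line between checkpoints. The helper names are hypothetical.

```python
# (label, months since December 2024, ARR in $ millions, raise in $ millions)
# ARR and raise figures are the ones quoted in the text; the month offsets
# are approximations, and the final checkpoint has no associated raise.
CHECKPOINTS = [
    ("Dec 2024", 0, 48, 160),
    ("Jun 2025", 6, 500, 900),
    ("Nov 2025", 11, 1_000, 2_300),
    ("Mar 2026", 15, 2_000, 0),
]


def monthly_revenue(arr_millions: float) -> float:
    """Annualized recurring revenue converted to a monthly figure."""
    return arr_millions / 12


def cumulative_revenue(checkpoints) -> float:
    """Trapezoid-rule total revenue, assuming linear growth between checkpoints."""
    total = 0.0
    for (_, m0, arr0, _), (_, m1, arr1, _) in zip(checkpoints, checkpoints[1:]):
        average = (monthly_revenue(arr0) + monthly_revenue(arr1)) / 2
        total += (m1 - m0) * average
    return total


total_raised = sum(raised for *_, raised in CHECKPOINTS)
revenue = cumulative_revenue(CHECKPOINTS)

print(f"raised: ${total_raised:,}M, estimated revenue: ${revenue:,.1f}M")
```

Under these assumptions the estimate comes to roughly $950 million of cumulative revenue against $3.36 billion raised.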

I’ll get to Cursor in a little bit, because it’s crucial to the Subprime AI Crisis.

What Is The Subprime AI Crisis?

The Subprime AI Crisis is what happens when somebody actually needs to start making money, or, put another way, stop losing quite so much, revealing how every link in the chain was funded based on questionable assumptions and deadly short-term thinking.

Here’s the order of events as I see them.

- As AI labs grow, their costs increase dramatically, both in their immediate compute costs and the demands from GPU providers for up-front cash to secure future compute allocation.

- In parallel, as AI startups grow, they burn more money per customer, which increases their dependence on venture capital.

- As this happens, AI labs are facing both a cash and compute crush, which means they have to start either controlling the amount of compute customers use or make more money from serving it.

- AI labs are thus forced to raise prices on AI startups, either through tolls (priority processing) or raw cost increases.

- Another important detail: one of the ways that AI labs raise prices isn’t even through “making things more expensive,” but selling access to models that burn more tokens. Think of this as the variable rate mortgage of the Subprime AI Crisis.

- As AI labs raise prices on their AI startup clients, these startups are forced to reduce the quality of their services and/or increase their costs after years of getting their customers used to a significantly-cheaper or better service, which makes their products less attractive, leading to customer churn.

- Worse still, these customers are used to using subscriptions from Anthropic and OpenAI with remarkable rate limits that are impossible for even a well-capitalized AI startup to compete with, which means that these changes only slow the rate of burn rather than making these companies profitable.

- As a result, these AI startups are more dependent on venture capital.

- While OpenAI and Anthropic are pretty happy at the top of the food chain, they are also dependent on the existence of AI startups for revenue for their models, which means that while these price changes increase the amount of revenue they get in the short term, they invariably push AI startups toward cheaper open source models and death.

- AI labs have, this entire time, been massively subsidizing their own products. Per Forbes, AI coding platform Cursor has faced numerous problems competing with Anthropic, who it claims at one point let users burn $5000 a month in tokens on a $200-a-month subscription, which reflects my own reporting from last year. Cursor also claims in the same article that its enterprise customers are profitable, but I call bullshit considering the multiple enterprise customers who have reached out to tell me they can burn $2 or $3 for every $1 of subscription.

- The problem is that a subsidy is always a losing proposition, which means that at some point Anthropic and OpenAI will have to massively reduce the amount of tokens that people use on their accounts.

- As I’ll get to later, this infuriates users and sends them running for the doors.

- At some point, the cost of doing business with Anthropic and OpenAI will kill AI startups, as there is no point at which any of them become sustainable, which will in turn kill the revenue from selling access to their models.

- At some point, users will be forced to burn tokens at a rate that actually matches their subscription costs, which will reduce the value of the product, which will in turn reduce the amount of subscribers they will have.

- And at some point, Anthropic and OpenAI will be left with a bunch of compute reservations they’ve made that they don’t need and can’t afford due to mistimed growth projections. As Dario Amodei said back in February, there’s no hedge on Earth that could stop Anthropic from going bankrupt if it buys too much compute.

- As the two largest customers of AI compute — there really isn’t even a distant third outside of xAI and hyperscalers, the latter of which are predominantly standing up OpenAI and Anthropic (or in Meta’s case a bunch of unprofitable LLM bullshit) — who’s going to pay for all of those data centers? Fucking Aquaman?

The entire generative AI industry is based on unprofitable, unsustainable economics, rationalized and funded by venture capitalists and bankers speculating on the theoretical value of Large Language Model-based services. This naturally incentivized developers to price their subscriptions at rates that attracted users rather than reflecting the actual economics of the services.

Sidenote: This is what worked in the past, if you squint hard enough. In reality, there are no historical comparisons to the AI bubble’s economics in the entire history of tech — no business has been this bad, no software has ever cost this much, and no solution exists other than charging prices that are 10x higher or reducing rate limits to the point that users want to kill you.

Venture capitalists are also part of the subprime AI crisis, sitting on “billions of dollars” of AI companies that lose hundreds of millions of dollars, their companies built on top of AI models owned by OpenAI and Anthropic with little differentiation and no path to profitability. Nobody is going public! Nobody is getting acquired! As I discussed back in AI Is A Money Trap, there really is no liquidity mechanism for the billions of dollars sunk into most AI companies. Going public also reveals the ugly financial condition of these startups. MiniMax, for example, made a pathetic $79 million in revenue in 2025, and somehow lost $250.9 million in the process.

Much like the houses in the great financial crisis, AI startups only retain their value as long as there is a market, or at least the perception that these companies could theoretically go public or be acquired. It only takes one failed exit or firesale to break the illusion.

At least you can live in a house. Every AI company will be a problem child that burns money on inference, bereft of intellectual property thanks to their dependence on OpenAI and Anthropic. What use is Perplexity without an eternal subsidy? The value of having Aravind Srinivas sitting around your office all day? I’d rather start my car in the garage.

“Fast-growing” AI companies only grew because they were allowed to burn as much money as they wanted selling services that are entirely unsustainable, raising more venture capital money with every burst of user growth, which they use to aggressively market to new users and grow further to raise another bump of venture capital.

As a result, AI labs and AI startups have created negative habits with their users in two ways:

- Users are inherently trained to expect a service that they pay for on a monthly basis, and their experience of said service is entirely separated from “token burn,” making it impractical to impossible to get them to use models directly, or to apply rate limits.

- The longer a user has used the service, the more their habits orient around an “unlimited” or “partially limited” service, which means your only options are to raise prices or apply rate limits, with the only justification for either of them being “new models” (which are more expensive) or “we’re unable to afford to run our company,” which the user doesn’t give a shit about.

To grow their user bases as fast as possible, AI startups (and AI labs) allowed their users to burn incredible amounts of tokens, I assume because they believed at some point things would become profitable or they’d always have access to easy venture capital. This created an entire industry of AI startups that disconnected their users from the raw economics of the product, creating a race to the bottom where every single AI startup must have every AI model and every AI feature and do every AI thing, all at an incredible cost that only ever seems to increase.

Another fun feature is that just about every product gives some sort of “free” access period for new (and expensive!) models, like when Cursor had a free access period for GPT 5’s launch. It’s unclear who shoulders the burden here, but somebody is paying those costs.

In any case, nowhere are the subsidies higher than those of Anthropic and OpenAI, who use their tens of billions of dollars of funding to allow users to burn anywhere from $3 to $13 for every dollar of subscription revenue to outpace their competition.

The Subprime AI Crisis is when the largest parties are finally forced to reckon with their rotten economics, and the downstream consequences that follow.

The Subprime AI Crisis Began In June 2025, When Anthropic and OpenAI Launched “Priority Service Tiers,” Jacking Up The Prices on AI Startups like Cursor, Augment Code, Replit and Lovable

As I reported in July 2025, starting in June last year, both OpenAI and Anthropic launched “priority service tiers,” jacking up the price on their enterprise customers (who pay for model access via their API to provide models in their software) for guaranteed uptime and less throttling of their services while also requiring an up-front (3-12 month) guarantee of token throughput.

Anthropic’s changes immediately increased the costs on AI startups like Lovable, Replit, Augment Code, and Anthropic’s largest customer, Cursor, which was forced to dramatically change its pricing from a per-request model to a bizarre pricing model where you pay model pricing with a 20% fee, but also receive A) at least as much as you pay in your subscription fee in tokens and B) “generous included usage” of Cursor’s Composer model.

What’s crazy is that even with this pricing, Cursor still gives away 16 cents for every dollar on its $60-a-month plan and $1 for every dollar on its $200-a-month plan, and that’s before “generous usage” of other models.

I’ll also add that Anthropic has already turned the screws on its subscription customers too, adding weekly limits to Claude subscribers on July 28, 2025, a few weeks after quietly tightening other limits.

Over the next few months, just about every AI startup had to institute some form of austerity. Replit shifted to something called “effort-based” pricing in June 2025, and then launched something called “Agent 3” in September 2025 that burned through users’ limits even faster — and, to be clear, Replit’s pricing gives you your subscription price in credits every single month on top of the cloud hosting necessary to get them online, meaning that a $20-a-month subscriber likely burns at least $25 a month, and Replit remains unprofitable.

Coding platform Augment Code was forced to change its pricing in October 2025 on a per-message basis, which meant that any message you sent cost the same amount no matter how complex the required response. In one case, a user spent $15,000 in tokens on a $250-a-month plan. Since then, Augment Code has moved to a confusing “credit” based model where they claim you use about 293 credits per Claude Sonnet 4.5 task, and users absolutely hate it because Augment Code was too cowardly to charge users based on the actual model pricing, because doing so would scare them away.

Now Augment Code is planning to remove its auto-complete and next edit features, claiming that their global usage was in decline and saying that developers “...are no longer working primarily at the level of individual lines of code; instead, they are orchestrating fleets of agents across tasks.”

Elsewhere, Notion bumped its Business Plan from $15 to $20-a-month per user thanks to its new “AI features,” which I imagine sucked for previous business subscribers who didn’t want “AI agents” or any of that crap but did want things like Single Sign On and Premium Integrations. The result? Profit margins dropped by 10%. Great job everybody!

In February 2026, Perplexity users noticed that rate limits had been aggressively trimmed from even its January 2026 limits, with $20-a-month subscribers now limited to arbitrary “average use weekly limits” on searches, and “monthly limits” on research queries (that one user worked out dropped them from 600 deep research queries a month to 20), down from 300+ searches a day and generous deep research limits.

Price hikes and product changes are likely to accelerate in the next few months as things get desperate. But now for a quick intermission…

I Will Fucking Piledrive You If You Bring Up Uber or Amazon Web Services Again (And Several Other Myths)

I have been training with Nik Suresh, author of I Will Fucking Piledrive You If You Mention AI Again, and while I’m kidding, I want to be clear that if you don’t stop bringing up Uber and AWS as examples of why AI will work out I may react poorly as I’m fucking tired of this point because it’s stupid and wrong. I will put you in the embrace of God, I swear.

The AI bubble and its representative companies do not and have never represented the buildout of Amazon Web Services or the growth and burnrate of Uber. If you are still saying this you are wrong, ignorant and potentially a big fucking liar.

As I discussed about a month ago, Amazon Web Services cost around $52 billion (adjusted for inflation!) from 2003 (when it was first used internally) through 2017, two years after it hit profitability. OpenAI raised $42 billion last year. Anthropic raised $30 billion in February. You are full of shit if you keep saying this.

As I discussed a few weeks ago, Uber’s economics are absolutely nothing like generative AI. Uber did not have capex, and burned those billions on R&D and marketing (making it more similar to Groupon in the end):

“But Ed, What About Uber?”

What about Uber? Uber is a completely different business to Anthropic and OpenAI or any other AI company. It lost about $30 billion in the last decade or so, and turned a weird kind of profitable through a combination of cutting multiple markets and business lines (EG: autonomous cars), all while gouging customers and paying drivers less.

The economics are also completely different. Uber does not pay for its drivers’ gas, nor their cars, nor does it own any vehicles. Its PP&E has been between $1.5 billion and $2.1 billion since it was founded. Uber’s revenue does not increase with acquisitions of PP&E, nor does its business become significantly more expensive based on how far a driver drives, how many passengers they might have in a day, or how many meals they might deliver. Uber is, effectively, a digital marketplace for getting stuff or people moved from one place to another, and its losses are attributed to the constant need to market itself to customers for fear that other rideshare (Lyft) or delivery companies (DoorDash, Seamless) might take its cash.

Also: Uber’s primary business model was on a ride-by-ride basis, not a monthly subscription. Users may have been paying less, but they were still thinking about each transaction with Uber in terms that made sense when prices were raised (though it briefly tried an unlimited ride pass option in 2016).

Here’re some other myths I’m tired of hearing about:

- They’re profitable on inference- no they are not! There is no proof of this statement anywhere! What’s your source here? Sam Altman saying it in August 2025? Dario Amodei saying he had gross margins of 50%? That was a “stylized fact” that he specifically said wasn’t about Anthropic, not that you care!

- What else have you got for me here? SemiAnalysis’ InferenceX? Gun to your head, explain to me how that’s the case.

- Oh you’ve heard the companies do “batch processing”? Why is all that “batch processing” not making them profitable?

- I swear to god if you say any shit about how these companies would be “profitable without training” I’m going to scream. No! AI training costs are not going away. They are an inherent part of running these companies, and are not capex. They are operating expenses.

- AI is being funded by the largest companies in the world with the most healthy balance sheets- I will obliterate you with the 100-Type Guanyin Bodhisattva! Microsoft is the only remaining hyperscaler that is funding the AI buildout without debt, and none of them will talk about AI revenues. This point is trotted out by imbeciles to try and say “this is nothing like the dot com bubble,” which I fundamentally agree with — it’s worse! It’s weirder! It’s a bigger waste! And they collectively need $2 trillion in brand new AI revenues by 2030 for any of it to make sense!

- The cost of AI services is going down because the token prices are going down- you are a silly person! You do not actually understand anything! The cost of tokens is not the same as the cost of serving tokens! OpenAI cut the cost of its o3 reasoning model by 80% a few weeks after the release of Claude Opus 4. Do you think that happened because of magical price reductions on the ops side? If so, I wish to study your brain.

- It’s the gym model they want people to subscribe and not use it it’s the gym model it’s the gym model- TZZZZT, whoops, looks like you got tazed.

Yet the most obvious one that I hear is the funniest: that Anthropic and OpenAI can just raise their prices!

March 2026 — The Subprime AI Crisis Comes For Anthropic’s Subscribers As It Rugpulls Subscribers On The Road To IPO

As both OpenAI and Anthropic aggressively stumble toward their respective attempts to take their awful businesses public, both are making moves to try and become “respectable businesses,” by which I mean “businesses that still lose billions of dollars but in less-annoying ways.” Last week, OpenAI killed Sora — both the app and the model — along with a $1 billion investment from Disney, with the Wall Street Journal reporting it was burning a million dollars a day, but Forbes estimating the number was closer to $15 million.

OpenAI will frame this as part of its “refocus” on a “Superapp” (per the WSJ) that combines ChatGPT, coding app Codex, and its dangerously shit browser into one rat king of LLM toys that nobody can work out a real business model for. All of this is part of a supposed internal effort to “prioritize coding and business customers” that we’ve heard some version of for months. Meanwhile, OpenAI’s attempts to bring advertising to its users have been a little embarrassing, with a two-month-long trial involving “less than 20%” of ChatGPT users resulting in “$100 million in annualized revenue,” better known as about $8.3 million in a month from what was meant to be a business line that brought in “low billions” in 2026 according to the Financial Times.

Coinciding confusingly with this “refocus” is OpenAI’s plan to nearly double its workforce from 4,500 to 8,000 people by the end of 2026. In fact, writing all this down makes it feel like OpenAI doesn’t really have much of a focus beyond “buy more stuff” and saying “superapp!” every six months. Hey, whatever happened to OpenAI’s plan to be “the interface to the internet” that Alex Heath reported would happen by the first half of 2025? Did that happen? Did I miss it?

In any case, OpenAI’s other strategy is to absolutely jam the gas pedal on its Codex coding product — for example, one user I found was able to burn $2,192 in tokens on a $200-a-month ChatGPT plan, and another was able to burn $1,461 in three days on the same subscription.
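To put those two Codex examples on the same footing, here is a rough sketch of the "burn multiple" (dollars of tokens per dollar of subscription). The function name and constants are mine, not anything OpenAI publishes. The first figure has no stated time period, so it is treated as a single billing month, and the 30-day projection for the second user is purely a hypothetical extrapolation from three days of usage.

```python
def burn_multiple(token_cost_usd: float, subscription_usd: float) -> float:
    """Dollars of tokens consumed per dollar of subscription revenue."""
    return token_cost_usd / subscription_usd

PLAN_USD = 200  # the $200-a-month ChatGPT plan cited above

# User one: $2,192 in tokens (assumed here to cover one billing month).
user_one_multiple = burn_multiple(2_192, PLAN_USD)

# User two: $1,461 in three days. Projecting that pace over 30 days is an
# assumption for illustration, not a reported figure.
user_two_daily = 1_461 / 3
user_two_projected_month = user_two_daily * 30
user_two_multiple = burn_multiple(user_two_projected_month, PLAN_USD)

print(f"User one: {user_one_multiple:.2f}x the subscription price")
print(f"User two: ${user_two_daily:,.0f} a day, or {user_two_multiple:.2f}x if sustained for 30 days")
```

Nobody is likely to sustain the second user's pace for a full month, but even the first example works out to roughly eleven dollars of tokens for every dollar paid.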

Meanwhile, Anthropic has been in the midst of a months-long rugpull following an all-out media campaign through December and January, pushing Claude Code on tech and business reporters who don’t bother to think too hard about things, per my Hater’s Guide to Anthropic:

On December 3 2025, the Financial Times would report that an Anthropic IPO would be happening as soon as 2026, while also revealing that the company was already working on another funding round valuing it at $300 billion.

Around this point, something strange started happening. Posts started appearing claiming that Claude Code was the best thing ever. Software development was “now boring” because of how good it was. Even Dario Amodei, a person directly incentivized to lie about it, said that an indeterminate number of coders at Anthropic no longer wrote any code. Even the creator of Claude Code said it did all his coding. One blogger said it was getting “too good.” Twitter flooded with obtuse stories about how Claude Code was doing all the work and they were scared about how good it was making them, all without really explaining what that meant.

In the last week of December, Anthropic would push a promotion doubling the rate limits on all of its monthly plans from December 25 to December 31, 2025.

By January 5, 2026, users were complaining about punishing new rate limits, with one user claiming that there had been a 60% reduction in token usage. Anthropic claimed that this was simply the expiration of holiday rate limits, but in reality, this is all part of Anthropic’s continual manipulation of rate limits to con customers into buying Claude subscriptions that decay in value.

In the end, Anthropic got what it wanted. The Verge would claim that Claude Code was “having a moment,” with word-of-mouth exposure spiking by 13 percentage points compared to the prior 30-day period between December 29th and January 26th, likely because of all the fucking media coverage and astroturfing. Despite there not really being a thing that anybody could point at, Claude Code was apparently the biggest thing ever, terrifying competitors and changing lives in some indeterminate way that was very cool, possibly.

The media campaign worked, and Anthropic was able to close a $30 billion round on February 12, 2026.

On February 18, 2026, Anthropic started banning anybody who used multiple Claude Max accounts, something that had never been an issue before it needed everybody to talk about Claude Code non-stop. The same day, Anthropic “cleared up” its Claude Code policies, saying that you can’t connect your Claude account to external services, meaning that all of those people who have been spinning up OpenClaw instances and buying $10,000 worth of Mac Minis are going to find that they’re suddenly having to pay for their API calls.

Around a month later, Anthropic would start a two-week-long 2x-rate limit promotion for off-peak usage that ended on March 27, 2026.

A day before, on March 26, 2026, Anthropic would announce that it was starting “peak hours,” with Claude users maxing out their sessions faster between the hours of 5AM and 11PM Pacific Time, Monday to Friday, with a spokesperson limply adding that “efficiency wins” will “offset this” and only “7% of users will hit the limits.” All of this was sold as a result of “managing the growing demand for Claude.”

If I’m honest, this might be Anthropic’s most-egregious swindle yet. By pumping off-peak usage and then immediately cutting it just before introducing peak hours, Anthropic further muddies the water of how much actual access you get to their products. Peak hours appear to have become aggressively restricted, and I imagine off peak feels…something like the regular peak hours used to.

Users almost immediately started hitting limits regardless of what time or day they were using it.

One user on the $100-a-month Max plan complained about hitting 61% of his session limit after four prompts (which cost $10.26 in tokens). Another said that they hit 63% of their rate limit on their $200-a-month plan in the space of a day, and another hit 95% after 20 minutes of using their Max plan (I’m gonna guess $100-a-month). This person hit their Max limit after “two or three things.” This one vowed to cancel their $200-a-month subscription after hitting their weekly limit in the space of a day, saying that they (and I’m going off of a translation, so forgive me) “expected a premium experience for $200, and what they got was constant limit stress.” This guy is scared to use Claude Code because of the limits. This guy blew 28% of his limits in less than an hour. This guy “can’t even do basic work on a 20x Max plan.” This guy hit his limits “in a few prompts” on Anthropic’s $20-a-month Pro plan, and the same prompts would have (apparently) consumed 5% of the limits “normally” (I assume last week), and while Thariq from Anthropic assured him that this was abnormal, he didn’t bother to respond to this guy in the thread who said he ran out of usage on the Max plan in 15 minutes.

While Anthropic Technical Staff Member Lydia Hallie posted that Anthropic was “aware people are hitting usage limits in Claude Code way faster than expected” and that some investigation was taking place, it’s hard to imagine that Anthropic had no idea that these limits were so severe or that any of this was a surprise.

Naturally, OpenAI had already reset limits on its Codex coding model the second that these reports began, claiming that they “wanted people to experiment with the magnificent plugins they launched” rather than saying something more-truthful like “we’re lowering limits so that the hogs braying with anger at Anthropic start paying OpenAI instead.”

While an eager Redditor claimed that these rate limits were a result of a cache bug on Claude Code, Anthropic quickly said that this wasn’t the reason, nor did they say anything about there being a reason or that anything was wrong.

Meanwhile, users are complaining about the reduced quality of outputs from its Claude Opus 4.6 model, with some saying it acts like cheaper models, and another noting that it might be because of Anthropic’s upcoming Mythos model, which was leaked when Fortune mysteriously somehow discovered an openly-accessible “data cache” that included 3000 assets but somehow no actual information about the model other than it would be a “step change” and its cybersecurity powers were too much to release at once, the tech equivalent of deliberately dropping a magnum condom out of your wallet in front of a woman, or Dril’s “I was just buying ear medication for my sick uncle…who’s a model by the way” post.

I’m gonna be honest I just don’t give a shit about Mythos or Capybara or any blatant leaks intended to spook cybersecurity stocks, especially as these models are also meant to be much more compute-intensive, and thus, vastly more expensive to run.

How will that work with these rate limits, exactly?

I think there’re a few ways this goes:

- Anthropic will announce that it’s “fixed the bug” (IE: eased rate limits it intentionally set) and apologize to the community, prolonging the inevitable. Rate limits will continue to decay over time, just at a slower pace.

- Anthropic keeps the limits where they are, and we hit a new normal that makes everybody really mad.

I wager that this is just the first of a few major belt-tightening operations from both Anthropic and OpenAI as they desperately shoulder-barge each other to file the world’s worst S-1. Both companies lose billions of dollars, both companies have no path to profitability, and both companies sell products — both to consumers and businesses — that simply do not work when users are forced to pay something approaching a sustainable cost.

Even with these egregious limits, a user I previously linked to was allowed to burn $10 in tokens in four prompts on a $100-a-month plan. Even in the world of Amodei’s Stylized Facts, that would still be $5 of prompts every 5 hours, which over the course of a month will absolutely be over $100.
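A back-of-the-envelope sketch of that math, under loudly stated assumptions: roughly $10 of list-price tokens per five-hour session (rounded from the example above), a 50% gross margin borrowed from the "stylized fact" as a hypothetical, and a 30-day month. None of these constants are Anthropic's actual numbers.

```python
PLAN_USD = 100.0               # the $100-a-month Max plan
TOKENS_PER_SESSION_USD = 10.0  # list-price tokens burned in one session (rounded)
GROSS_MARGIN = 0.50            # hypothetical, per the "stylized fact"
SESSION_HOURS = 5              # length of one rate-limit window

# What a session would cost to serve if the 50% margin were real.
cost_per_session = TOKENS_PER_SESSION_USD * (1 - GROSS_MARGIN)

# Sessions at which serving costs equal the whole subscription fee.
sessions_to_break_even = PLAN_USD / cost_per_session

# Number of five-hour windows in a 30-day month.
windows_per_month = (30 * 24) // SESSION_HOURS

share = sessions_to_break_even / windows_per_month
print(f"${cost_per_session:.2f} per session, {sessions_to_break_even:.0f} sessions to eat the fee")
print(f"That is {share:.1%} of the {windows_per_month} windows in a month")
```

On these assumptions, a subscriber only has to max out about one window in seven before the plan is underwater, and that is with the generous margin.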

Yet the sheer fury of Anthropic’s customers only proves the fundamental weakness of Anthropic’s business model, and the impossibility of ever finding any kind of profitability.

And the AI industry has nobody to blame but itself.

Anthropic and OpenAI (and Other AI Startups) Have Trained Their Users To Use Their Unsustainable Products In Unsustainable Ways, And Their Users Are Intolerant of Rate Limits and Price Increases

While it’s really easy to make fun of people obsessed with LLMs, I want to be clear that Anthropic and OpenAI are inherently abusive companies that have built businesses on theft, deception and exploitation.

Anybody who’s spent more than a few minutes in one of the many AI Subreddits has read story after story of models mysteriously “becoming dumb,” or rate limits that seem to expand and contract at random. Even the concept of “rate limits” only serves to further deceive the customer. Outside of intentionally asking the model, users are entirely unaware of their “token burn,” or at the very least have built habits around rate limits that, as of right now, are entirely different to even a month ago.

A user who bought a $200-a-month Claude Max subscription in December 2025, a mere three months later, now very likely cannot do the same things they did on Claude Code when they decided to subscribe, and those who use these subscriptions for their day jobs are now having to sit on their hands waiting for the rate limits to pass, and have no clarity into whether they’ll be able to work at the same rate they did even a month ago, let alone when they subscribed.

All of this is a direct result of Anthropic, OpenAI, and other AI startups intentionally deceiving customers through obtuse pricing so that people would subscribe believing that the product would continue providing the same value, and I’d argue that annual subscriptions to these services amount to, if not fraud, a level of consumer deception that deserves legal action and regulatory involvement.

To be clear, no AI company should have ever sold a monthly subscription, as there was never a point at which the economics made sense. Yet had these companies actually charged their real costs, nobody would have bothered with AI, because even with these highly-subsidized subscriptions, AI still hasn’t delivered meaningful productivity benefits, other than a legion of people who email me saying “it’s changed my life as a programmer!” without explaining to me what that means or why it matters or what the actual result is at the end.

Isn’t it kind of weird that we have these LLM subscriptions to products that arbitrarily become less-accessible or less-performant in a way that’s impossible to really measure, and labs never seem to address? We don’t know the actual rate limits on Claude (other than via CCusage or Shellac’s research), or ChatGPT, or any of these products by design, because if we did, it would be blatantly obvious how unsustainable and ridiculous these products were.

And the magical part about Large Language Models is that your most engaged customers are also your most-expensive, and the more-intensive the work, the more expensive the outputs become.

If you’re about to say “well they’ll just raise the prices,” perhaps you should check Twitter or Reddit, and notice that Anthropic’s customers are screaming like they’re being stung to death by bees because of new rate limits that only let them burn $10 of compute in five hours. Do you think these people would be comfortable with a $130-a-month, $1,300-a-month or $2,500-a-month subscription? One that performs the same way (if not worse) as their $20, $100 or $200-a-month subscription did?

Or do you think they’ll do Aaron Sorkin speeches about Anthropic’s greed and immediately jump to ChatGPT in the hopes that the exact same thing doesn’t happen a few months later?
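For a sense of scale, here is a quick sketch of the price increases implied by those hypothetical subscriptions. Pairing each current price with a hypothetical one in the order listed ($20 with $130, $100 with $1,300, $200 with $2,500) is my assumption about how the figures line up.

```python
# (current monthly price, hypothetical monthly price), paired in listed order.
PRICE_PAIRS = [(20, 130), (100, 1_300), (200, 2_500)]

def implied_multiple(current_usd: float, hypothetical_usd: float) -> float:
    """How many times more the subscriber would pay at the hypothetical price."""
    return hypothetical_usd / current_usd

multiples = [implied_multiple(current, hypo) for current, hypo in PRICE_PAIRS]

for (current, hypo), multiple in zip(PRICE_PAIRS, multiples):
    print(f"${current}/month -> ${hypo:,}/month is a {multiple:g}x increase")
```

Every one of those lands inside the range of $3 to $13 burned per dollar of subscription revenue, which is the point: these are not scare numbers, just the subsidy passed back to the customer.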

Much as homeowners were assured that they’d simply be able to refinance their homes before the adjustable rates hit, AI fans repeatedly switch subscriptions to whichever provider is currently offering the best deal, in some cases paying for multiple subscriptions under the explicit knowledge that rate limits existed and would become increasingly-punishing.

Based on the reactions of their users, I don’t really see how the AI labs — or AI startups, for that matter — fix this problem.

On one hand, AI subscribers are acting like babies, crying that their product won’t let them use $2,500 of tokens for $200. This was an obvious con, a blatant subsidy, and a party that wouldn’t last forever.

On the other, AI labs and AI startups have never, ever acted with any degree of honesty or clarity with regards to their costs, instead choosing to add “exciting” new features that often burn more tokens without charging the end user more, which sounds nice until you remember that things cost money and money is not unlimited.

The very foundation of every AI startup is economically broken. The majority of them sell some sort of “deep research” report feature that costs several dollars to generate at a time, and many sell some form of expensive coding or “computer use” product, tool-based web search features, and many other products that exist to keep a user engaged while burning tokens, all without explaining to the user “yeah, we’re spending way more than we make off of you, this is an introductory rate.”

This intentional, blatant and industry-wide deception set the terms for the Subprime AI Crisis. By selling AI services at $20 or $50 or even $200-a-month, AI startups and labs created the terms for their own destruction, with users trained for years to expect relatively unlimited access sold at a flat rate for a service powered by Large Language Models that burn tokens at arbitrary rates based on their inference of the user’s prompt, making costs near-impossible to moderate.

And when these companies make changes to slightly bring costs under control, their users act with revulsion, because rate limits aren’t price increases, but direct changes to the functionality of the product. Imagine if a subscription to a car service was $200-a-month, and let you go 50 miles, or 25 miles, or 100 miles, or 4 miles, or 12 miles depending on the day, and never at any point told you how many miles you had left beyond a percentage-based rate limit. To make matters worse, sometimes the car would arbitrarily take a different route, driving you five miles in the opposite direction, or decide to park on the side of the curb, charging you for every mile.

This is the reality of using an AI product in the year of our lord 2026. A Claude Code or OpenAI Codex user cannot with any clarity say that in three months their current workload or workflow will be possible based on their current subscription. Somebody buying an annual subscription to any AI product is immediately sacrificing themselves to the whims of startup CEOs that intentionally decided to deceive users for years as a means of juicing growth.

And when these limits decay, does it eventually make the ways in which some of these users work with Claude Code impossible? At what point do these rate limit shifts start changing how reliable the experience is and how much one can get done in a day? What use is a tool that gets more unreliable to access and expensive over time? Even if this week’s rate limits are an overcorrection, one has to imagine they resemble the future of Anthropic’s products, and are indicative of a larger pattern of decay in the value of its subscriptions.

I’m going to be as blunt as possible: every bit of AI demand that exists (and barely $65 billion of it existed in 2025) exists only because of subsidies, and if these companies were to charge a sustainable rate, said demand would evaporate.

There is no righting this ship. There is no pricing that makes sense that customers will pay at scale, nor is there a magical technological breakthrough waiting in the wings that will reduce costs. Vera Rubin will not save AI, nor will some sort of “too big to fail” scenario, because “too big to fail” was based on the fact that banks would have stopped providing dollars to people and insurance companies would have stopped issuing insurance.

Despite NVIDIA’s load-bearing valuation and the constant discussion of companies like OpenAI and Anthropic, their actual economic footprint is quite small in comparison to the trillions of dollars of CDOs and trillion plus dollars of mortgages involved in the great financial crisis. The death of the AI industry would be cataclysmic to venture capitalists, bring about the end of the hypergrowth era for the Magnificent Seven, and may very well kill Oracle, but — seriously — that is nothing in comparison to the scale of the Great Financial Crisis. This isn’t me minimizing the chaos to follow, but trying to express how thoroughly fucked everything was in 2008.

On Friday I’m going to get into this more in the premium. This wasn’t an intentional ad, I just realized as I wrote that sentence that that was what I have to do.

Anyway, I’ll close with a grim thought.

What’s funny about the comparison to the subprime mortgage crisis is that there are, in all honesty, multiple different versions of the Stripper With Five Houses from The Big Short:

- The AI companies that only have customers because they spend $3 to $10 for every dollar of revenue.

- The venture capitalists that are ultra-rich on paper, heavily leveraging their firms in companies like Harvey (worth “$11 billion”) and Cursor (worth “$29.3 billion”) that burn hundreds of millions or billions of dollars and are now both too large to sell to another company and too shitty a company to take public.

- The AI labs that have built massive businesses on selling heavily-subsidized subscriptions to customers who don’t want to pay for them and API calls to AI startups that can only pay them if infinite resources exist.

- The AI data center companies that, thanks to readily-available debt, have started 200GW of projects (and only started building 5GW of them) for AI demand that doesn’t exist, entirely based on the theoretical sense that maybe it will in the future.

- Oracle, which is building hundreds of billions of dollars of data centers for OpenAI (which needs infinite resources to be able to pay its compute costs) and taking on equally-large amounts of debt, all because it assumes that nothing bad will ever happen.

- The customers of AI startups that are building lifestyles, identities and workflows around them believing that we’re “just at the beginning” on top of unsustainable AI subscriptions.

All of these entities are acting based on a misplaced belief that the world will cater to them, and that nothing will ever change. While there might be different levels of cynicism — people that know there’re subsidies but assume they’ll be fine once they arrive, or people like Sam Altman that are already rich and don’t give a shit — I think everybody in the AI industry has deluded themselves into believing they have the mandate of Heaven.

The Pale Horses Return

Back in August 2024, I named several pale horses of the AIpocalypse, and after absolutely fucking nailing the call two years early on OpenAI’s “big, stupid magic trick” of launching Sora to the public, I think it’s time to update them:

- Any further price increases or service degradations from Anthropic and OpenAI are a sign that they’re running low on cash.

- Any reduction in capex from big tech is a sign that the AI bubble is bursting, as NVIDIA’s continued growth only comes from Microsoft, Google, Amazon, Meta, Oracle and other large companies buying tens of billions of dollars of servers from Taiwanese ODMs like Foxconn and Quanta.

- Any further price increases or service degradations from AI startups, such as Cursor, Perplexity, Harvey, Lovable or Replit. These are all token-intensive venture-hogs that burn $4 or $5 for every $1 of revenue.

- Any discussion of layoffs at AI companies.

- The collapse of a data center deal that has yet to commence construction.

- The collapse of a data center already in construction, but before it’s finished.

- The collapse of an already-constructed data center.

- CoreWeave or any major data center player having trouble or failing to raise debt. We’ve already seen the beginnings of this with CoreWeave’s issues raising for its Lancaster PA data center.

- The Further Collapse of Stargate Abilene: If anything happens to the construction of OpenAI’s flagship data center (being built by Oracle) in Abilene Texas, you know shit is getting bad.

- Any problems or delays with OpenAI or Anthropic going public: both of these companies are the financial equivalent of Chernobyl, so I can only imagine it’ll take some talented accountants to get them in any shape where investors without lead poisoning actually want to get involved.

- Any problems with Blue Owl as a going concern: Blue Owl is the loosest lender in the AI bubble, and if it falls behind on its loans or has issues with its limited partners, that’s a bad sign too.

- Any problems with SoftBank: SoftBank was somehow able to raise $40 billion in debt (payable in a year) to fund its chunk of OpenAI’s pseudo-$110 billion round, running over its promised 25% ratio of loans to the value of its assets. This puts SoftBank in a very precarious position.

- ARM’s stock tanking: A great deal of SoftBank’s wealth comes from its investment in ARM, including a $15 billion margin loan based on its stock. If ARM drops below $80, things are going to get hairy for Masayoshi Son.

- Any issues with NVIDIA’s customers’ ability to pay: If NVIDIA’s customers don’t reliably pay it, things will look bad come earnings season.

- NVIDIA misses on earnings: This is an obvious one, but I think the markets will crap their pants if NVIDIA misses on earnings estimates.

Anyway, thanks for reading this piece.